Instagram is one of the world’s largest social media networks, with about 1.21 billion users as of 2021, or about 28% of internet users, according to Statista.

This article is a guide on how to programmatically download Instagram data from a profile with Python, using two methods. The first method downloads media using Instaloader. The second uses a simple Python script to get JSON data about the profile.

It is important to note that scraping data may violate Instagram’s terms of service, and we recommend that you only download data from your own account.

Using Instaloader

Instaloader is a Python package for downloading Instagram media. It is easy to use and makes extracting and downloading data quick. To begin using Instaloader, first install it using pip:

pip install instaloader

Once installed, you can use it from its command line interface or as a package in a Python script.

To use it from the command line, you use the instaloader command. For example, to display help information, you enter the following command in your terminal:

instaloader --help

To download the profile picture of a user, you enter the command with the --profile option, followed by the username, like so:

instaloader --profile <USERNAME OF THE PROFILE>

For this command to work, however, you need to log in first. To do so, pass in the --login option:

instaloader --login <YOUR USERNAME> --profile <USERNAME OF THE PROFILE>

What to Download

With Instaloader, you can download different media. This extract of the manual page shows you all the different things you can download:

profile Download profile. If an already-downloaded profile has been renamed, Instaloader automatically finds it by its unique ID and renames the folder likewise.

@profile Download all followees of profile. Requires --login. Consider using :feed rather than @yourself.

"#hashtag" Download #hashtag.

%location_id Download %location_id. Requires --login.

:feed Download pictures from your feed. Requires --login.

:stories Download the stories of your followees. Requires --login.

:saved Download the posts that you marked as saved. Requires --login.

-- -shortcode Download the post with the given shortcode

filename.json[.xz] Re-Download the given object.

+args.txt Read targets (and options) from given textfile.

To download the posts of a particular user, you would enter the command:

instaloader --login <YOUR USERNAME> <TARGET USERNAME>

In this case, your username is the username of your authenticated Instagram account, and the target username is the profile whose posts you want to download.

To download posts from the accounts that a profile follows (its followees), you would enter the command:

instaloader --login <YOUR USERNAME> @<TARGET USERNAME>

Note that the only difference between this command and the previous one is the @ before the target username.

An alternative to using the Instaloader command line interface is to use it as a Python package. The package is well documented in the official Instaloader documentation.
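As a rough illustration of the package route, the sketch below downloads the most recent posts of a profile. The function name download_recent_posts and its parameters are hypothetical names chosen for this example, and the sketch assumes that a session was previously saved by running instaloader --login from the command line.

```python
from itertools import islice


def download_recent_posts(target_username, limit=5, login_user=None):
    """Download up to `limit` of the newest posts of `target_username`.

    Hypothetical helper for illustration; returns the number of posts handled.
    """
    # Imported inside the function so this module can be loaded
    # even on a machine where instaloader is not installed yet.
    import instaloader

    loader = instaloader.Instaloader()
    if login_user:
        # Assumption: a session file already exists for this user,
        # created earlier with `instaloader --login <YOUR USERNAME>`.
        loader.load_session_from_file(login_user)

    profile = instaloader.Profile.from_username(loader.context, target_username)

    count = 0
    # get_posts() yields posts lazily; islice stops after `limit` items.
    for post in islice(profile.get_posts(), limit):
        loader.download_post(post, target=profile.username)
        count += 1
    return count
```

Calling download_recent_posts('<TARGET USERNAME>', limit=3, login_user='<YOUR USERNAME>') would then save the files into a folder named after the target profile.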

With Instaloader, you can download different media files. However, if you wanted to extract metadata such as a user’s bio, Instaloader alone would not be enough. With the next method, you will write a Python script to extract a user profile’s data.

Writing a Python Script to Download Instagram Data

Overview

In this method, we will write a simple script to download Instagram data in Python. This method relies on a little-known Instagram JSON API for extracting data from public profiles.

If you append the query string __a=1&__d=1 to the end of a profile URL, Instagram responds with JSON data about the profile.

For example, for the profile with the username instagram, a request to https://instagram.com/instagram/?__a=1&__d=1 returns JSON data about that profile as a response.
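To make the URL pattern concrete, here is a minimal sketch that builds such a URL with the standard library. The helper name build_profile_url is an assumption made for this example and is not part of any Instagram tooling.

```python
from urllib.parse import urlencode


def build_profile_url(username):
    """Return the profile URL with the __a and __d query parameters appended."""
    # urlencode turns the dictionary into the string "__a=1&__d=1"
    query = urlencode({"__a": 1, "__d": 1})
    return f"https://instagram.com/{username}/?{query}"


print(build_profile_url("instagram"))
# prints https://instagram.com/instagram/?__a=1&__d=1
```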

Writing the Script

To make the request in Python, we are going to be using the Python requests module. However, you can also use pycURL, urllib, or any other client library you prefer to use to make HTTP requests. To begin, install the requests module using pip.

pip install requests

Once that has been installed, open a file to write your script in and import the get function from the requests module. In addition, import the loads function from json. This will be used to parse the JSON response.

from requests import get
from json import loads

Once you have imported these functions, create a variable that stores the URL to your Instagram profile.

url = 'https://instagram.com/<YOUR USERNAME HERE>'

As mentioned before, in order to extract Instagram data from a profile, you need to add the __a=1 and __d=1 query parameters. To define those, we create a dictionary object with the parameters.

params = { '__a': 1, '__d': 1 }

To authorize the requests we make, Instagram requires a session ID. Later on, I will show you how to get your session ID. For now, just put in a placeholder value that you will substitute later.

cookies = { 'sessionid': '<YOUR SESSION ID HERE>' }

Next, define a function that will run when the request is successful.

def on_success(response):
    # The response body is a JSON string
    profile_data_json = response.text
    # Parse the JSON string into a Python dictionary
    parsed_data = loads(profile_data_json)
    print('User fullname:', parsed_data['graphql']['user']['full_name'])
    print('User bio:', parsed_data['graphql']['user']['biography'])

The function I have defined takes in the response object, extracts the JSON from the response body, and then parses the JSON into a dictionary. After this, I am only extracting the full name and biography of the profile.
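The parsing step can be tried without making a request. The sketch below runs it on a made-up payload that only mimics the graphql and user keys used above; real responses contain many more fields, and the helper name extract_profile_fields is an assumption for this example. It uses dict.get so that a missing key yields None instead of raising a KeyError.

```python
from json import loads

# Made-up payload for illustration only; it mirrors the keys used in the article.
sample_body = (
    '{"graphql": {"user": {"full_name": "Example User", '
    '"biography": "Just an example bio"}}}'
)


def extract_profile_fields(body):
    """Parse a JSON response body and pick out the name and biography."""
    data = loads(body)
    # Fall back to empty dictionaries if the expected keys are absent.
    user = data.get("graphql", {}).get("user", {})
    return {
        "full_name": user.get("full_name"),
        "biography": user.get("biography"),
    }


fields = extract_profile_fields(sample_body)
print("User fullname:", fields["full_name"])
print("User bio:", fields["biography"])
```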

Next, define the function that will run if there is an error.

def on_error(response):
    # Printing the error if something went wrong
    print('Something went wrong')
    print('Error Code:', response.status_code)
    print('Reason:', response.reason)

Then we call the get function to make the request, passing in the URL, params, and cookies as arguments.

response = get(url, params=params, cookies=cookies)

Lastly, we check the status code of the response. If the status is 200, we call the on_success function. Otherwise, we call the on_error function.

if response.status_code == 200:

on_success(response)

else:

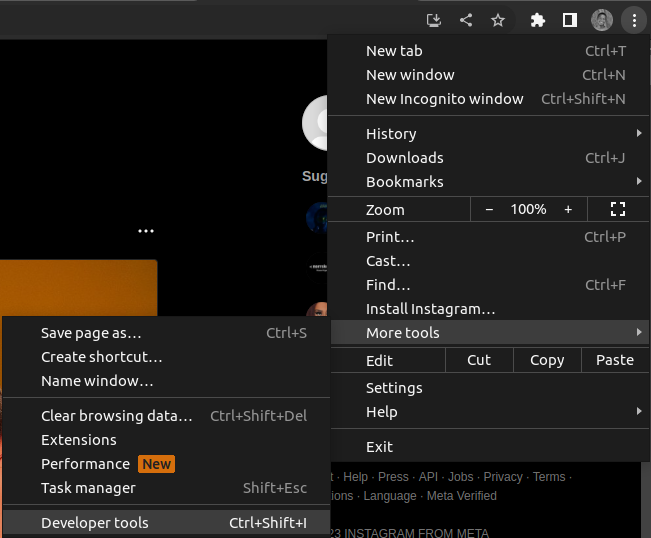

on_error(response)At this point, we are done writing the code. What’s left is to get the sessionid. To get the session id, open your Google Chrome and open Instagram on Web. Ensure you are logged in, then open Dev Tools using Ctrl + Shift + I or Cmd +Shift + I.

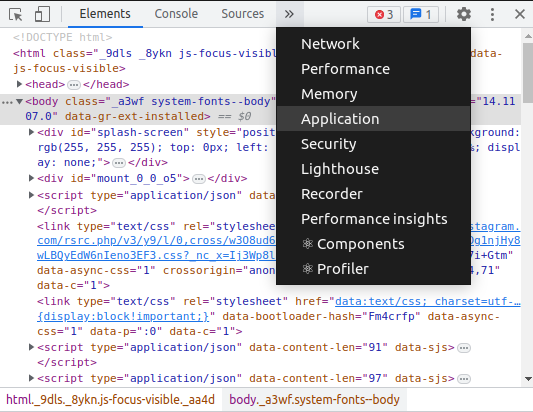

With Dev Tools open, open the Application tab.

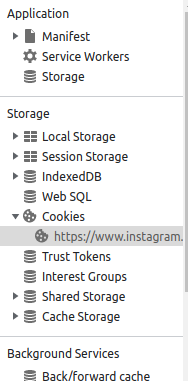

Then click the Cookies submenu to view Cookies used by Instagram.

Then copy the value of the sessionid cookie from the list of cookies shown in the Dev Tools panel.

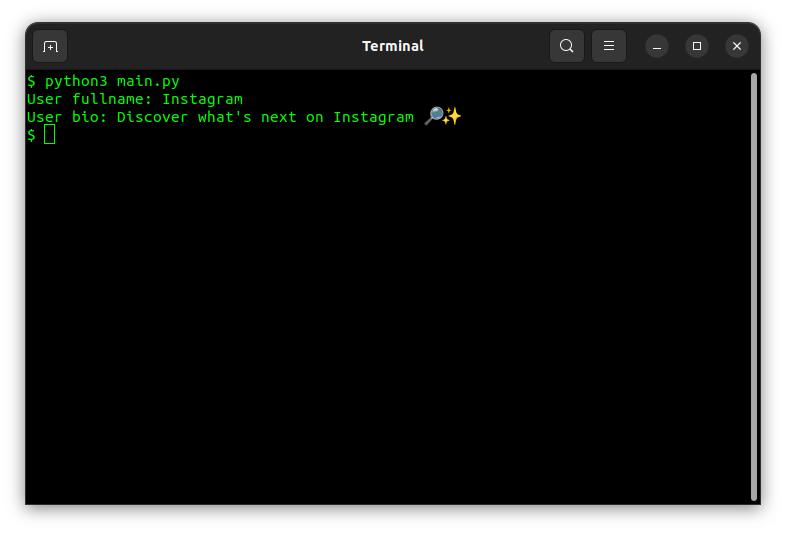
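Because the session ID grants access to your account, you may prefer not to store it in the script file. One possible approach, sketched below, is to read it from an environment variable instead. The variable name INSTAGRAM_SESSIONID and the helper load_cookies are hypothetical names chosen for this example.

```python
import os

# Hypothetical variable name chosen for this sketch; any name would work.
SESSION_ENV_VAR = "INSTAGRAM_SESSIONID"


def load_cookies(environ=os.environ):
    """Build the cookies dictionary from an environment variable."""
    session_id = environ.get(SESSION_ENV_VAR, "").strip()
    if not session_id:
        raise RuntimeError(f"Set {SESSION_ENV_VAR} before running the script")
    return {"sessionid": session_id}
```

With this in place, cookies = load_cookies() could stand in for the hard-coded dictionary.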

Once you copy the session ID, paste it into the script and execute the script. In my case, using instagram as the username (https://instagram.com/instagram?__a=1&__d=1), this is the output:

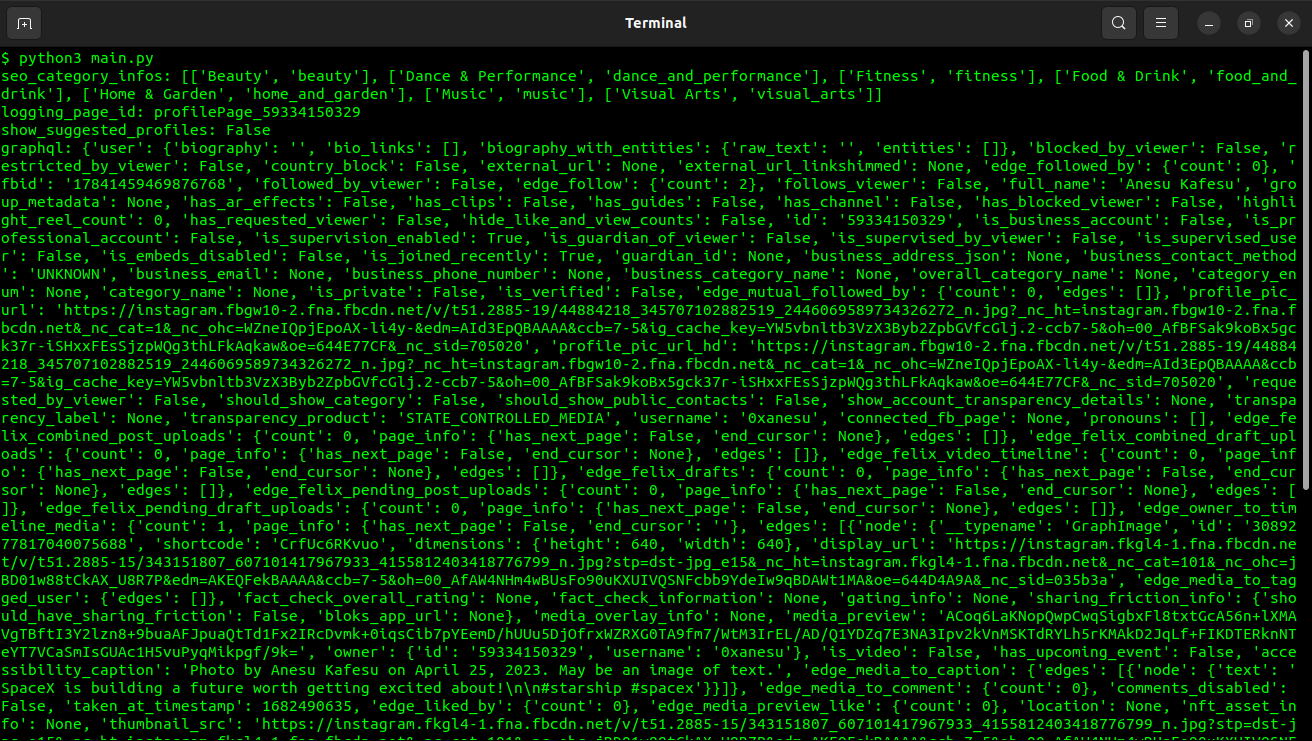

And just like that, we are able to download profile data programmatically. The JSON API returns far more data than the two fields printed here.

And that is how you extract data and posts from Instagram profiles.
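As mentioned earlier, urllib can be used in place of requests. The sketch below shows one way the same request could be prepared with only the standard library. The helper name build_profile_request is an assumption for this example, and the code only builds the request object without sending it.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_profile_request(username, session_id):
    """Prepare a request for a profile's JSON data without sending it."""
    query = urlencode({"__a": 1, "__d": 1})
    url = f"https://instagram.com/{username}/?{query}"
    # The session ID is sent as a cookie, as in the requests version.
    return Request(url, headers={"Cookie": f"sessionid={session_id}"})


request = build_profile_request("instagram", "<YOUR SESSION ID HERE>")
print(request.full_url)
```

Passing the prepared object to urllib.request.urlopen would send it. Unlike requests, urlopen raises an HTTPError for error status codes, so the error branch would be written as an exception handler rather than a status check.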

Final Words

In this article, we went through how to download posts and media using Instaloader. We then wrote a custom script to extract profile JSON data that includes so much more than just the media content.