If you’re familiar with deep learning, you’ve likely heard the phrase PyTorch vs. TensorFlow more than once.

PyTorch and TensorFlow are two of the most popular deep learning frameworks. This guide presents an overview of the salient features of both to help you decide which one to use for your next deep learning project.

In this article, we’ll first introduce the two frameworks, PyTorch and TensorFlow, and then sum up the features they offer.

Let’s begin!

What Is PyTorch?

PyTorch is an open-source framework for building machine learning and deep learning models for various applications, including natural language processing and computer vision.

It’s a Pythonic framework released in 2016 by Meta AI (then Facebook AI) and based on Torch, a package written in Lua.

Recently, Meta AI released PyTorch 2.0. The new release offers better support for distributed training, model compilation, and graph neural networks (GNNs), among other improvements.

What Is TensorFlow?

Introduced in 2015, TensorFlow is an open-source end-to-end machine learning framework by Google. It comes packed with features for data preparation, model deployment, and MLOps.

With TensorFlow, you get cross-platform development support and out-of-the-box support for all stages in the machine learning lifecycle.

PyTorch vs. TensorFlow

Both PyTorch and TensorFlow are super popular frameworks in the deep learning community. For most applications that you want to work on, both these frameworks provide built-in support.

Here, we’ll summarize the key features of both PyTorch and TensorFlow and also identify use cases where you might prefer one framework over the other.

#1. Library of Datasets and Pretrained Models

A deep learning framework should come with batteries included. Oftentimes, you won’t want to code a model from scratch. Rather, you can leverage pre-trained models and fine-tune them for your use case.

Similarly, we’d want commonly used datasets to be readily available. This would enable us to build experimental models quickly without having to set up a data collection pipeline or import and clean data from other sources.

To this end, we’d want these frameworks to come with both datasets and pretrained models so we can get a baseline model much faster.

PyTorch Datasets and Models

PyTorch has libraries such as torchtext, torchaudio, and torchvision for NLP, audio, and image processing tasks, respectively. So when you’re working with PyTorch, you can leverage the datasets and models provided by these libraries, including:

- torchtext.datasets and torchtext.models for datasets and processing for natural language processing tasks

- torchvision.datasets and torchvision.models provide image datasets and pretrained models for computer vision tasks

- torchaudio.datasets and torchaudio.models for datasets and pretrained model weights and utilities for machine learning on audio

TensorFlow Datasets and Models

- TensorFlow Datasets (official) includes datasets that you can readily use with TensorFlow

- TensorFlow Hub and Model Garden have pre-trained models available for use across multiple domains

Additionally, you can look for both PyTorch and TensorFlow models in the Hugging Face Model Hub.

#2. Support for Deployment

In the PyTorch vs. TensorFlow debate, support for deployment often takes center stage.

A machine learning model that works great in your local development environment is a good starting point. However, to derive value from machine learning models, it’s important to deploy them to production and monitor them continuously.

In this section, we’ll take a look at features that both PyTorch and TensorFlow offer to deploy machine learning models to production.

TensorFlow Extended (TFX)

TensorFlow Extended, abbreviated as TFX, is a deployment framework that is based on TensorFlow. It provides functionality that helps you orchestrate and maintain machine learning pipelines, including features for data validation and data transformation.

With TensorFlow Serving, you can deploy machine learning models in production environments.

TorchServe

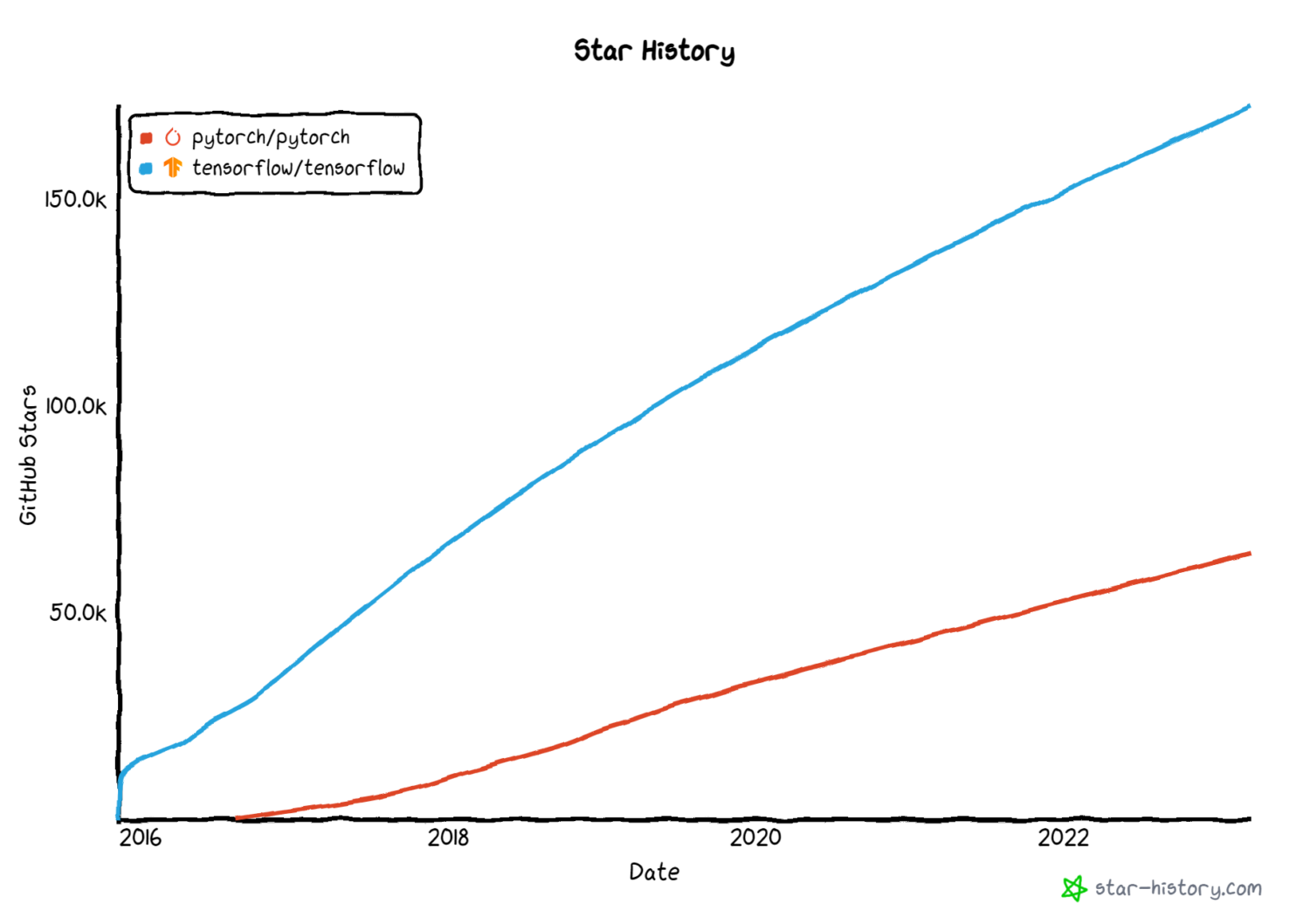

There’s a common opinion that PyTorch is popular in the research community while TensorFlow is popular in the industry. However, recently, both these frameworks have found widespread use.

Like TensorFlow Serving, PyTorch provides TorchServe, an easy-to-use framework for serving PyTorch models in production. On the TensorFlow side, you can also use TensorFlow Lite to deploy machine learning models on mobile and other edge devices.

Although both frameworks provide deployment support, TensorFlow ships with a more complete native deployment stack (TFX, TensorFlow Serving, and TensorFlow Lite). It is, therefore, often the preferred choice in production environments.

#3. Features for Model Interpretability

You can build deep learning models for applications used in domains like healthcare and finance. But if the models are black boxes that output a given label or prediction, there’s a challenge of interpreting the model’s predictions.

This challenge gave rise to interpretable machine learning (or explainable ML), a field that develops approaches to explain the working of neural networks and other machine learning models.

Therefore, interpretability is super important to deep learning and to better understanding the working of neural networks. Let’s see what features PyTorch and TensorFlow offer for interpretability.

PyTorch Captum

PyTorch Captum, the model interpretability library for PyTorch, provides several features for model interpretability.

These features include attribution methods like:

- Integrated Gradients

- LIME, SHAP

- DeepLIFT

- GradCAM and variants

- Layer attribution methods

TensorFlow Explain (tf-explain)

TensorFlow Explain (tf-explain) is a library that provides functionality for neural network interpretability, including:

- Integrated Gradients

- GradCAM

- SmoothGrad

- Vanilla Gradients and more.
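Integrated Gradients appears in both lists above, so here is a rough idea of what it computes. The following is a minimal pure-Python sketch, not the Captum or tf-explain API: the tiny logistic model, its weights, and the helper names are all made up for illustration. The method averages the model’s gradient along a straight path from a baseline input to the actual input and scales it by the input difference.

```python
import math

# Hypothetical toy model: a single logistic unit with fixed weights.
WEIGHTS = [2.0, -1.0, 0.5]
BIAS = 0.1


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def model(x):
    """Return the model's output probability for input vector x."""
    return sigmoid(sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS)


def model_grad(x):
    """Analytic gradient of the output with respect to each input feature."""
    p = model(x)
    return [p * (1.0 - p) * w for w in WEIGHTS]


def integrated_gradients(x, baseline, steps=200):
    """Approximate Integrated Gradients with a midpoint Riemann sum."""
    total = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        # Point on the straight line from the baseline to the input.
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(model_grad(point)):
            total[i] += g
    # Average gradient along the path, scaled by the input difference.
    return [(xi - b) * t / steps for xi, b, t in zip(x, baseline, total)]


x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline)
```

A useful sanity check is that the attributions sum to (approximately) the difference between the model’s output at the input and at the baseline. In practice, the libraries handle the gradient computation for real networks automatically.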

So far, we’ve seen the features for interpretability. Let’s proceed to another important aspect – privacy.

#4. Support for Privacy-Preserving Machine Learning

The usefulness of machine learning models depends on access to real-world data. However, this comes with the downside that the privacy of the data can be compromised. Recently, there have been significant advancements in privacy-preserving machine learning techniques such as differential privacy and federated learning.

PyTorch Opacus

Differentially private model training ensures that individual records stay protected while the model still learns useful information about the dataset as a whole.

And PyTorch Opacus allows you to train models with differential privacy. To learn how to implement differentially private model training, check out the introduction to Opacus.
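To give a feel for what differentially private training involves, here is a minimal pure-Python sketch of the core step used in DP-SGD-style training: clip each example’s gradient to a maximum norm, sum the clipped gradients, add Gaussian noise, and average. This is not the Opacus API; the function names and parameters (max_norm, noise_multiplier) are assumptions chosen for illustration.

```python
import math
import random


def clip_gradient(grad, max_norm):
    """Scale a gradient vector down so its L2 norm is at most max_norm."""
    norm = math.sqrt(sum(g * g for g in grad))
    scale = min(1.0, max_norm / norm) if norm > 0 else 1.0
    return [g * scale for g in grad]


def private_gradient(per_sample_grads, max_norm, noise_multiplier, rng):
    """Clip per-example gradients, add Gaussian noise to the sum, then average."""
    clipped = [clip_gradient(g, max_norm) for g in per_sample_grads]
    dim = len(clipped[0])
    summed = [sum(g[i] for g in clipped) for i in range(dim)]
    # Noise scale is tied to the clipping bound, which caps any one example's influence.
    sigma = noise_multiplier * max_norm
    noisy = [s + rng.gauss(0.0, sigma) for s in summed]
    return [v / len(clipped) for v in noisy]


rng = random.Random(0)
grads = [[3.0, 4.0], [0.3, 0.4]]  # hypothetical per-example gradients
update = private_gradient(grads, max_norm=1.0, noise_multiplier=1.0, rng=rng)
```

Clipping bounds how much any single record can move the model, and the noise masks whatever influence remains. A library like Opacus additionally tracks the privacy budget spent over training, which this sketch leaves out.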

TensorFlow Federated

Federated learning removes the need for a centralized data collection and processing entity. In a federated setting, the data never leaves its owner’s device or premises. Therefore, federated learning facilitates better data governance.

TensorFlow Federated provides functionality to train machine learning models on decentralized data.
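As a rough illustration of training on decentralized data, here is a minimal pure-Python sketch of federated averaging (FedAvg), one common aggregation scheme. It is not the TensorFlow Federated API; the function name and the client data are hypothetical. Each client trains locally and sends only its model weights and example count to the server, which combines them into a weighted average.

```python
def federated_average(client_updates):
    """Average client weights, weighted by each client's number of examples.

    client_updates is a list of (weights, num_examples) pairs, where weights
    is a flat list of floats of the same length for every client.
    """
    total_examples = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    averaged = [0.0] * dim
    for weights, n in client_updates:
        for i, w in enumerate(weights):
            averaged[i] += w * n / total_examples
    return averaged


# Two hypothetical clients; the raw training data never leaves them.
updates = [([1.0, 2.0], 10), ([3.0, 4.0], 30)]
global_weights = federated_average(updates)
```

Here the second client holds three times as much data, so its weights count three times as much in the global model.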

#5. Ease of Learning

PyTorch is a Pythonic deep-learning framework. Coding comfortably in PyTorch requires intermediate Python proficiency, including a good grasp of object-oriented programming concepts such as inheritance.
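To see where inheritance comes in, here is a minimal sketch of how a PyTorch model is typically defined, assuming PyTorch is installed. The class name and layer sizes are made up for illustration. You subclass nn.Module, create the layers in `__init__`, and describe the forward pass in `forward`.

```python
import torch
from torch import nn


class TinyClassifier(nn.Module):
    """A hypothetical two-layer classifier defined by subclassing nn.Module."""

    def __init__(self, in_features=4, hidden=8, num_classes=3):
        super().__init__()  # initialize the parent nn.Module
        self.layers = nn.Sequential(
            nn.Linear(in_features, hidden),
            nn.ReLU(),
            nn.Linear(hidden, num_classes),
        )

    def forward(self, x):
        # Called when you invoke the model on a batch of inputs.
        return self.layers(x)


model = TinyClassifier()
batch = torch.randn(5, 4)  # 5 random examples with 4 features each
logits = model(batch)      # one row of class scores per example
```

Calling `model(batch)` runs `forward` under the hood, which is why understanding classes, inheritance, and `super()` helps when reading and writing PyTorch code.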

On the other hand, with TensorFlow, you can use the Keras API. This high-level API abstracts away some of the low-level implementation details. As a result, if you’re just starting out with building deep learning models, you may find Keras easier to use.

PyTorch vs. TensorFlow: An Overview

So far, we’ve discussed the features of PyTorch and TensorFlow. Here’s a comprehensive comparison:

| Feature | PyTorch | TensorFlow |
|---|---|---|
| Library of Datasets and Pretrained Models | Datasets and pre-trained models in torchtext, torchaudio, and torchvision | TensorFlow Datasets, TensorFlow Hub, and Model Garden |
| Deployment | TorchServe for serving machine learning models | TensorFlow Serving and TensorFlow Lite for model deployments |
| Model Interpretability | PyTorch Captum | tf-explain |
| Privacy-Preserving Machine Learning | PyTorch Opacus for differentially private model training | TensorFlow Federated for federated machine learning |
| Ease of Learning | Requires intermediate proficiency in Python | Relatively easier to learn and use |

Learning Resources

Lastly, let’s wrap up our discussion by going over some helpful resources to learn PyTorch and TensorFlow. This is not an exhaustive list but a list of cherry-picked resources that’ll get you up to speed quickly with these frameworks.

#1. Deep Learning with PyTorch: A 60-Minute Blitz

The 60-minute blitz tutorial on the PyTorch official website is an excellent beginner-friendly resource to learn PyTorch.

This tutorial will help you get up and running with PyTorch fundamentals such as tensors and autograd, and build a basic image classification neural network with PyTorch.

#2. Deep Learning with PyTorch: Zero to GANs

Deep Learning with PyTorch: Zero to GANs by Jovian.ai is another comprehensive resource for learning deep learning with PyTorch. Over the course of about six weeks, you can learn:

- PyTorch basics: tensors and gradients

- Linear regression in PyTorch

- Building deep neural networks, ConvNets, and ResNets in PyTorch

- Building Generative Adversarial Networks (GANs)

#3. TensorFlow 2.0 Complete Course

If you’re looking to get the hang of TensorFlow, the TensorFlow 2.0 Complete Course on freeCodeCamp’s community channel will be helpful.

#4. TensorFlow – Python Deep Learning Neural Network API by DeepLizard

Another great TensorFlow course for beginners is from DeepLizard. In this beginner-friendly TensorFlow course, you’ll learn the basics of deep learning, including:

- Loading and preprocessing datasets

- Building vanilla neural networks

- Building convolutional neural networks (CNNs)

Conclusion

In summary, this article gave you a high-level overview of PyTorch and TensorFlow. The optimal framework depends on the project you’re working on, so factor in the support for deployment, explainability, and more when you choose.

Are you a Python programmer looking to learn these frameworks? If so, you can consider exploring one or more of the resources shared above.

And if you’re interested in NLP, check out this list of natural language processing courses to take. Happy learning!