Building AI models for production is not a one-off process. It is iterative: datasets, models, and hyperparameters are continuously tweaked to improve the models’ accuracy and speed.

Throughout this process, it is important to record information about datasets, models, and hyperparameters for future reference. That is where metadata comes in.

What is Metadata in ML?

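Simply put, metadata is data about data. In the context of machine learning, metadata is the data generated at each stage of the ML lifecycle. This includes information about the datasets, models, and other artifacts involved at each stage, for example which dataset version trained a model, which hyperparameters were used, and how the model scored during evaluation.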
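
To make the idea concrete, here is a minimal Python sketch of what a metadata record might look like. The `MetadataRecord` schema, its field names, and the example values are hypothetical; each real platform defines its own schema.

```python
from dataclasses import dataclass, field, asdict
from datetime import datetime, timezone
from typing import Any


@dataclass
class MetadataRecord:
    """One piece of metadata captured at a lifecycle stage (hypothetical schema)."""
    stage: str       # e.g. "data", "training", "evaluation"
    artifact: str    # name of the dataset, model, or other artifact
    attributes: dict[str, Any] = field(default_factory=dict)
    created_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


# Illustrative values only, not real results.
records = [
    MetadataRecord("data", "reviews.csv", {"rows": 10_000, "version": "v2"}),
    MetadataRecord("training", "sentiment-model", {"learning_rate": 3e-4, "epochs": 5}),
    MetadataRecord("evaluation", "sentiment-model", {"accuracy": 0.91}),
]

# Group the records by lifecycle stage so they can be looked up later.
by_stage: dict[str, list[dict[str, Any]]] = {}
for record in records:
    by_stage.setdefault(record.stage, []).append(asdict(record))

print(sorted(by_stage))  # ['data', 'evaluation', 'training']
```

Keeping the stage and artifact name on every record is what later lets you answer questions such as "which data and settings produced this model?"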

This article reviews some of the best metadata-tracking platforms for ML applications.

Let’s explore!

AimStack

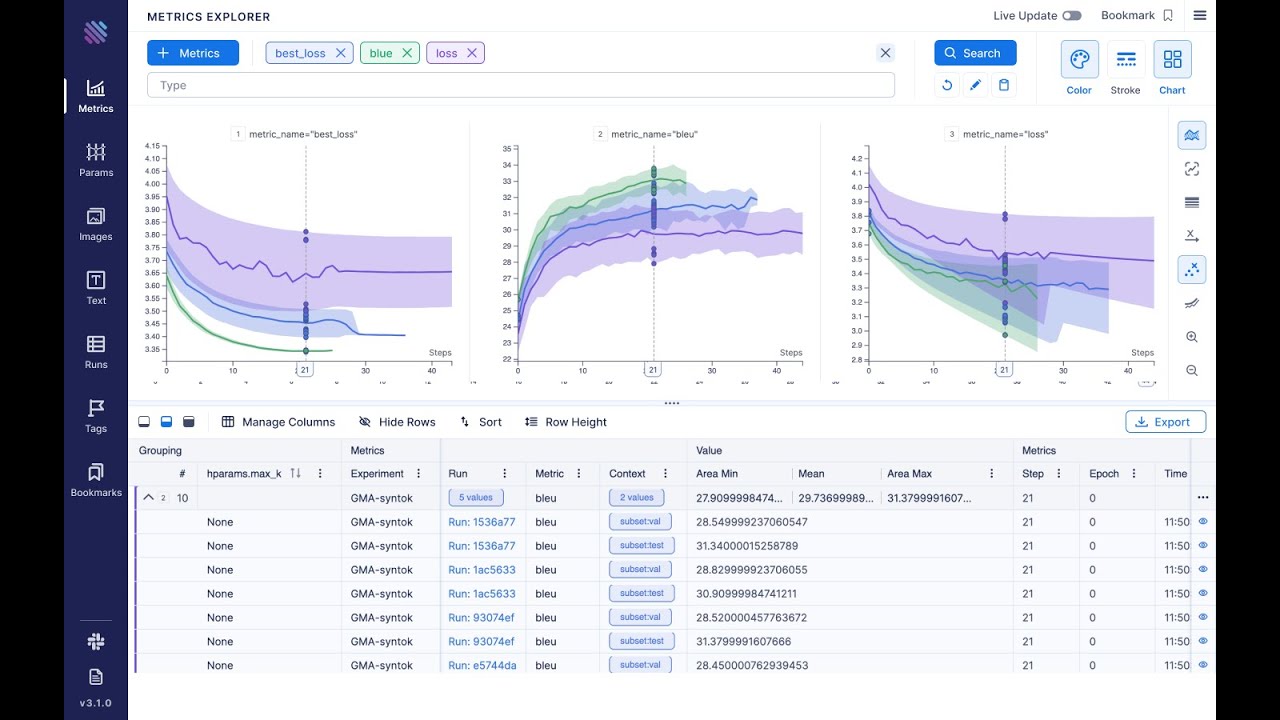

AimStack (Aim) is an easy-to-use, open-source tracker for ML metadata. Because it is open source, you can self-host it. It is implemented as a lightweight Python package that you use to log your ML runs directly from your code.

It also provides a UI for visualizing your metadata, and you can query runs programmatically through its SDK. It integrates with popular ML tools such as PyTorch, TensorFlow, and MLflow.
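
The core idea behind trackers like Aim is to log each run's hyperparameters and metrics as structured records, then query those records later. The sketch below illustrates that idea using only the Python standard library. It is not Aim's API (Aim's SDK has its own `Run` object); the helper names `log_run` and `query_runs`, the JSON-lines file format, and the metric values are assumptions made for illustration.

```python
import json
import tempfile
from pathlib import Path
from typing import Callable


def log_run(log_file: Path, run_id: str, hparams: dict, metrics: dict) -> None:
    """Append one run's hyperparameters and final metrics as a JSON line (hypothetical helper)."""
    with log_file.open("a", encoding="utf-8") as f:
        record = {"run_id": run_id, "hparams": hparams, "metrics": metrics}
        f.write(json.dumps(record) + "\n")


def query_runs(log_file: Path, predicate: Callable[[dict], bool]) -> list[dict]:
    """Return every logged run for which predicate(run) is True (hypothetical helper)."""
    with log_file.open(encoding="utf-8") as f:
        runs = [json.loads(line) for line in f if line.strip()]
    return [run for run in runs if predicate(run)]


log_file = Path(tempfile.mkdtemp()) / "runs.jsonl"

# Illustrative values only.
log_run(log_file, "run-1", {"lr": 1e-3, "batch_size": 32}, {"val_loss": 0.42})
log_run(log_file, "run-2", {"lr": 3e-4, "batch_size": 64}, {"val_loss": 0.35})
log_run(log_file, "run-3", {"lr": 3e-4, "batch_size": 32}, {"val_loss": 0.39})

# Query: keep runs that used lr=3e-4, then pick the lowest validation loss.
matches = query_runs(log_file, lambda run: run["hparams"]["lr"] == 3e-4)
best = min(matches, key=lambda run: run["metrics"]["val_loss"])
print(best["run_id"])  # run-2
```

A dedicated tracker adds what this sketch lacks: per-step metric series, a UI, concurrent writers, and an indexed store instead of a flat file.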

Neptune

Neptune provides a single platform for managing your ML metadata. Its plans range from a free individual tier to paid team and enterprise plans.

With Neptune, you can log metadata and view it in an interactive online dashboard. You can log the dataset used, the hyperparameters, and virtually anything else produced during your machine learning workflow, which lets you track and monitor experiments.

Neptune integrates with popular ML tools such as Hugging Face, scikit-learn, and Keras.

Domino Data Lab

Domino is a popular enterprise MLOps platform that teams use to continually develop, deploy, monitor, and manage machine learning models.

Domino is made up of several components. The one most relevant to metadata management is its system of record, which continually tracks changes to code, tools, and data through version control. You can also log metrics, artifacts, and any other information you need.
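
Version tracking of data often comes down to content fingerprinting: hash the file, store the digest alongside the run, and any change to the data shows up as a new digest. The following sketch shows this general technique with Python's `hashlib`. It is not how Domino implements its system of record, and the `fingerprint` helper and the file contents are hypothetical.

```python
import hashlib
import tempfile
from pathlib import Path


def fingerprint(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, reading it in chunks to bound memory use."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


data = Path(tempfile.mkdtemp()) / "train.csv"
data.write_text("id,label\n1,pos\n2,neg\n")
v1 = fingerprint(data)

# Changing the file changes the digest, so a digest stored with a run
# records exactly which version of the data that run used.
data.write_text("id,label\n1,pos\n2,neg\n3,pos\n")
v2 = fingerprint(data)

print(v1 != v2)  # True
```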

Viso

Viso is an all-in-one, no-code platform for building computer vision applications. With Viso, you can automate manual work and build scalable models. It includes the features you need throughout the development lifecycle of your machine learning applications.

These include tools for data collection, data annotation, model training, development, and deployment, among others. Using the Viso deployment manager, you can monitor your deployed models to identify issues.

You can also monitor events and metrics in the cloud and present them in interactive dashboards for your team to view and collaborate on.

Studio by Iterative AI

Studio is a platform for data and model management created by Iterative AI. It offers different plans, including a free plan for individuals.

Studio has a model registry that keeps track of your machine learning models in Git repositories. The platform also provides experiment tracking, visualization, and collaboration features.

It also helps you automate your machine learning workflows through a no-code UI, and it integrates with popular Git providers such as GitHub, GitLab, and Bitbucket.

Seldon

Seldon simplifies serving and managing machine learning models at scale. It works well with tools such as TensorFlow, scikit-learn, and Hugging Face.

Among other things, Seldon improves efficiency by helping you monitor and manage your models. It lets you track model lineage, use version control to keep track of your data and models, and log any other metadata.
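
Model lineage can be thought of as a graph in which each artifact points to the artifacts it was derived from. The sketch below walks such a graph to list everything a model depends on. The in-memory `lineage` dictionary, the artifact names, and the `upstream` helper are hypothetical illustrations, not anything Seldon exposes.

```python
# Hypothetical lineage graph: each artifact maps to the artifacts it was derived from.
lineage: dict[str, list[str]] = {
    "model:v3": ["dataset:clean-v2", "code:commit-a1b2c3"],
    "dataset:clean-v2": ["dataset:raw-v1", "code:commit-9f8e7d"],
    "dataset:raw-v1": [],
    "code:commit-a1b2c3": [],
    "code:commit-9f8e7d": [],
}


def upstream(artifact: str, graph: dict[str, list[str]]) -> list[str]:
    """Return every ancestor of artifact in depth-first (pre-order) order, without duplicates."""
    order: list[str] = []
    seen: set[str] = set()
    # Push parents in reverse so they are visited in their listed order.
    stack = list(reversed(graph.get(artifact, [])))
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        order.append(node)
        stack.extend(reversed(graph.get(node, [])))
    return order


print(upstream("model:v3", lineage))
# ['dataset:clean-v2', 'dataset:raw-v1', 'code:commit-9f8e7d', 'code:commit-a1b2c3']
```

Tracing lineage this way answers questions like "which raw data and code commits does this deployed model depend on?" when a problem is reported.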

Valohai

Valohai makes it easy for developers to log metadata related to experiments, datasets, and models. This enables companies to build a knowledge base for their machine learning operations.

It integrates with tools such as Snowflake, BigQuery, and Redshift. Valohai is aimed mainly at enterprise users and can be used as SaaS, in your own cloud account, or on your own physical infrastructure.

Arize

Arize is an MLOps platform that helps machine learning engineers detect issues with their models, trace the causes of those issues, resolve them, and improve their models.

It functions as a central hub for monitoring model health. With Arize, you can monitor model drift, performance, and data quality. It also monitors your model's schema and features and compares changes across model versions.
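
Drift monitoring typically compares the distribution of a feature in production against a baseline. One widely used metric is the Population Stability Index, PSI = Σ (aᵢ − eᵢ) · ln(aᵢ / eᵢ), where eᵢ and aᵢ are the fractions of baseline and current values falling into bin i. The sketch below computes PSI with fixed bin edges. It is a generic illustration, not Arize's implementation, and the bin edges, the `eps` floor for empty bins, and the sample data are assumptions.

```python
import math
from bisect import bisect_right


def bin_proportions(values: list[float], edges: list[float]) -> list[float]:
    """Fraction of values in each bin defined by sorted interior edges."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def psi(baseline: list[float], current: list[float], edges: list[float], eps: float = 1e-6) -> float:
    """Population Stability Index between a baseline sample and a current sample."""
    expected = bin_proportions(baseline, edges)
    actual = bin_proportions(current, edges)
    total = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)  # floor empty bins to avoid log(0)
        total += (a - e) * math.log(a / e)
    return total


edges = [0.25, 0.5, 0.75]
baseline = [i / 100 for i in range(100)]       # spread evenly over [0, 1)
shifted = [0.3 + i / 200 for i in range(100)]  # concentrated in [0.3, 0.8)

print(psi(baseline, baseline, edges))        # 0.0
print(psi(baseline, shifted, edges) > 0.25)  # True
```

A commonly cited rule of thumb treats PSI above roughly 0.25 as significant drift, though teams tune thresholds per feature. Note that the `eps` floor dominates the score whenever a bin is empty, as it is for the first bin of `shifted` here.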

Arize makes it easy to compare model versions in A/B tests. You can query metrics using an SQL-like language or access them programmatically through its GraphQL API.
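
An SQL-style A/B comparison usually boils down to grouping outcomes by model version and aggregating a metric. The sketch below uses standard SQL through Python's built-in `sqlite3` module, not Arize's own query language, and the `predictions` table and its outcomes are made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE predictions (model_version TEXT, correct INTEGER)")

# Hypothetical logged outcomes: 1 if the prediction matched the label, else 0.
rows = [("A", 1)] * 80 + [("A", 0)] * 20 + [("B", 1)] * 86 + [("B", 0)] * 14
conn.executemany("INSERT INTO predictions VALUES (?, ?)", rows)

# Group by model version and average the 0/1 outcomes to get accuracy.
query = """
    SELECT model_version, COUNT(*) AS n, AVG(correct) AS accuracy
    FROM predictions
    GROUP BY model_version
    ORDER BY model_version
"""
results = conn.execute(query).fetchall()
for version, n, accuracy in results:
    print(f"model {version}: n={n}, accuracy={accuracy:.2f}")
# model A: n=100, accuracy=0.80
# model B: n=100, accuracy=0.86
```

A real A/B comparison would also check whether the difference is statistically significant before declaring a winner.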

Final Words

In this article, we looked at what metadata is and why it matters in AI development.

We also covered some of the most popular tools for managing the metadata produced in your machine learning workflows.

Next, check out AI platforms for building modern applications.