Open-source database software provides cost-effective, flexible, and community-driven solutions for businesses of all sizes, eliminating vendor lock-in and proprietary license constraints.

Open-source database software offers transparency, enabling users to customize and optimize it for their project needs, and its growing popularity is reflected in market trends. Valued at approximately $10 billion in 2023, the open-source database software market is projected to reach $35.83 billion by 2030, which corresponds to a CAGR of about 20%.

With so many options available, choosing the right open-source database can be challenging. To simplify your decision, I’ve researched and compiled a list of the best open-source database software for personal and professional projects.

- 1. MySQL

- 2. PostgreSQL

- 3. MariaDB

- 4. CockroachDB

- 5. ClickHouse

- 6. MongoDB

- 7. RethinkDB

- 8. SQLite

- 9. Apache Cassandra

- 10. Apache CouchDB

- 11. FerretDB

- 12. TimescaleDB


1. MySQL

MySQL is a widely used open-source relational database system, originally created by MySQL AB and now developed by Oracle. It’s a go-to choice for building web apps, managing data, and handling fast and reliable database needs. It uses SQL to store and manage data effectively.

It’s popular because it’s everywhere—almost every developer learns it first, and most content management systems (CMS) and frameworks support it. It works well for most use cases, so there’s no mystery to its success.

MySQL is available under two licenses: an open-source GPL version 2 license and a commercial license from Oracle for organizations needing to distribute it with proprietary applications.

Initially designed for online transaction processing (OLTP), MySQL is still great for handling transactional data. Today, Oracle’s MySQL HeatWave cloud service has expanded its capabilities to include analytics and machine learning.

MySQL became famous as a key part of the LAMP stack (Linux, Apache, MySQL, PHP/Perl/Python) that powered the first wave of web development. It’s still the backbone of many websites today.

MySQL Key Features

- Cross-Platform Support: MySQL works on Windows, Linux, and macOS, making it flexible for different systems.

- Security: Provides strong features like authentication, encryption, and access control to keep data safe.

- Editions: Comes in a free Community Edition and paid versions with extra features and support.

- Cloud Compatibility: Works well with major cloud platforms like AWS, Azure, and Google Cloud, including managed services like Amazon RDS.

When to use MySQL

Use MySQL when you need a reliable, fast, and easy-to-use database for web applications or small to medium-sized projects. It’s a good fit for websites, e-commerce platforms, or blogs. MySQL handles large datasets and works well with popular programming languages like PHP, Python, and JavaScript.

When not to use MySQL

Avoid using MySQL if you need advanced analytics, real-time processing, or support for unstructured data like documents or graphs. It’s not ideal for very large-scale, highly distributed systems.

2. PostgreSQL

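PostgreSQL is an open-source object-relational database management system (ORDBMS). It has been released under its current name since 1997, grew out of the POSTGRES project started at UC Berkeley in 1986, and is a top choice in communities like Ruby, Python, and Go.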

It works on Linux, macOS, Windows, and BSD systems. PostgreSQL ensures reliable data handling and supports features like foreign keys, joins, views, triggers, and stored procedures. It handles complex data, geospatial tasks, or high transaction workloads.
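The relational features listed above (foreign keys, joins, and views) can be tried out in a small sketch. To keep it self-contained, it runs against Python's built-in sqlite3 module as a stand-in engine, not against PostgreSQL itself. The table and column names are made up for illustration, and the PRAGMA line is SQLite-specific.

```python
import sqlite3

# Stand-in engine: Python's built-in sqlite3, used only so the sketch runs anywhere.
conn = sqlite3.connect(":memory:")
# SQLite-specific switch; PostgreSQL enforces foreign keys without it.
conn.execute("PRAGMA foreign_keys = ON")

conn.executescript("""
CREATE TABLE customers (
    id   INTEGER PRIMARY KEY,
    name TEXT NOT NULL
);
CREATE TABLE orders (
    id          INTEGER PRIMARY KEY,
    customer_id INTEGER NOT NULL REFERENCES customers(id),
    total       NUMERIC NOT NULL
);
-- A view that joins the two tables and aggregates per customer.
CREATE VIEW customer_totals AS
    SELECT c.name, SUM(o.total) AS spent
    FROM customers c
    JOIN orders o ON o.customer_id = c.id
    GROUP BY c.name;
""")

conn.execute("INSERT INTO customers (id, name) VALUES (1, 'Ada')")
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)", [(1, 20), (1, 22)]
)

totals = conn.execute("SELECT name, spent FROM customer_totals").fetchall()

# The foreign key rejects an order that points at a customer who does not exist.
try:
    conn.execute("INSERT INTO orders (customer_id, total) VALUES (99, 5)")
    fk_rejected = False
except sqlite3.IntegrityError:
    fk_rejected = True

print(totals, fk_rejected)
```

The same CREATE TABLE, JOIN, and CREATE VIEW statements are close to what you would write for PostgreSQL, though data types and trigger or procedure syntax differ between engines.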

PostgreSQL is secure and protects your data with features like role-based access control and encryption. This makes it a reliable choice for finance, healthcare, web development, and data analytics industries.

It offers advanced capabilities, such as support for JSON/JSONB for handling unstructured data, full-text search, and geospatial data processing through PostGIS.

A global community of developers actively improves PostgreSQL. The latest version, PostgreSQL 17.2, was released on November 21, 2024.

PostgreSQL Key Features

- Diverse Data Types: Supports arrays, ranges, UUIDs, and geolocation data.

- Document Storage: Offers native storage for JSON, XML, and key-value pairs using Hstore.

- Replication: Provides both synchronous and asynchronous replication methods.

- Programmability: Allows scripting in PL/pgSQL, Perl, and Python.

- Full-Text Search: Includes built-in capabilities for full-text search.

When to use PostgreSQL

PostgreSQL is best used when your data is relational and you need a robust, reliable database with strong support for complex queries, transactions, and data integrity.

When not to use PostgreSQL

PostgreSQL is a weaker fit when your data isn’t relational or your workload has requirements that a row-oriented relational database doesn’t serve well. In analytics, for example, new reports are constantly built from existing data, so the workload is dominated by large reads, and a strict schema can get in the way. PostgreSQL does support document storage, but for very large document datasets a purpose-built document database may perform better.


3. MariaDB

MariaDB is an open-source relational database management system (RDBMS) developed as a substitute for MySQL by MySQL’s original creator, Michael “Monty” Widenius. This software is trusted by big brands like Wikipedia, WordPress.com, and Google.

It is a fast, scalable, and reliable solution for transforming data into structured information. Perfect for banking systems, websites, and beyond, MariaDB efficiently handles diverse applications. As a drop-in replacement for MySQL, it delivers exceptional flexibility through its advanced storage engines, plugins, and tools.

MariaDB offers a variety of storage engines tailored to specific needs. The Spider storage engine facilitates distributed transactions by enabling the creation of distributed databases. The ColumnStore engine provides a columnar storage solution suitable for analytical workloads. Aria enhances performance for complex queries, particularly in read-heavy workloads.

MariaDB uses SQL for accessing data and now includes advanced features like GIS and JSON support, making it suitable for modern applications.

MariaDB Key Features

- Fully Open Source: MariaDB Server is released under the GPLv2 license, guaranteeing its availability as free and open-source software.

- Improved Replication: Features like multi-source replication make data copying more flexible.

- JSON Functions: Includes several functions to work efficiently with JSON data.

- Compatibility with WordPress: If you’re running a WordPress site, MariaDB is a reliable alternative to MySQL, managing your website’s data effectively.

When to use MariaDB

Use MariaDB if you’re looking for a reliable alternative to MySQL and don’t plan to switch back. It’s a good fit when you want its newer features, such as additional storage engines, to enhance your project’s relational data model.

When not to use MariaDB

Avoid using MariaDB if you need advanced analytics, extremely high transaction workloads, or compatibility with proprietary tools like SQL Server.


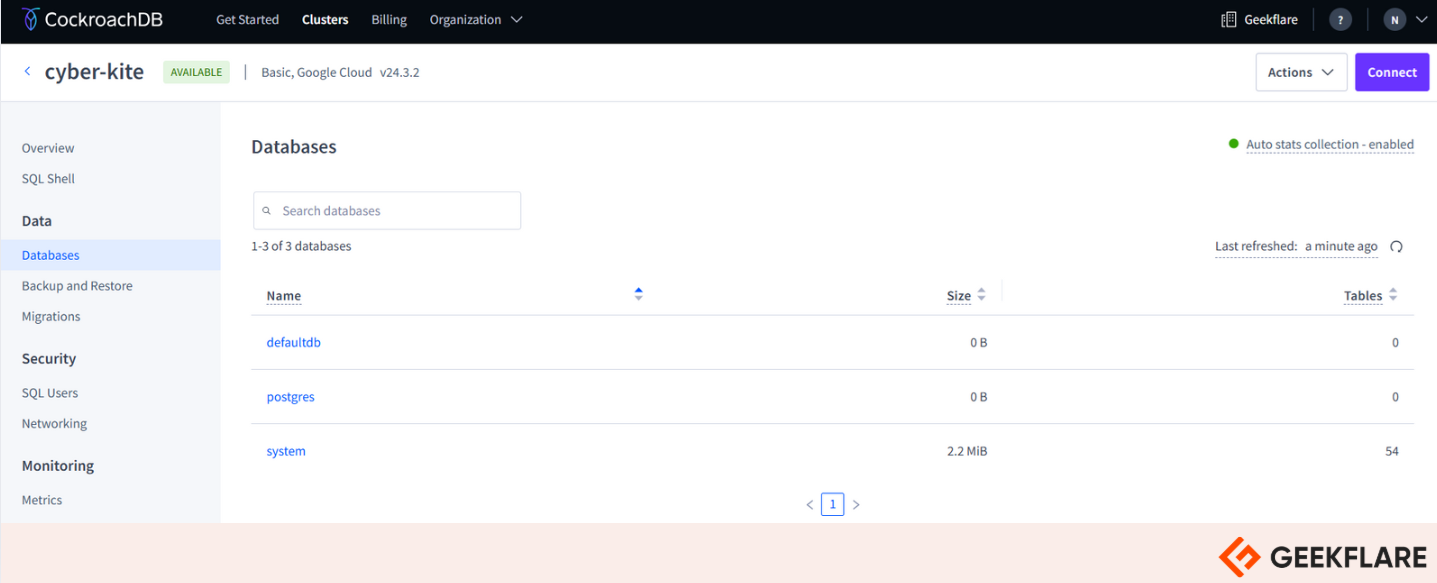

4. CockroachDB

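CockroachDB is a distributed SQL database designed for high availability, scalability, and strong consistency. Its source code is publicly available, although recent releases use a source-available license rather than an OSI-approved open-source one, so check the license terms before adopting it.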

CockroachDB is cloud-native and can be deployed across various cloud providers or on-premises infrastructures. You can pin data to specific regions to reduce user latency and meet compliance requirements, ensuring data stays where it should be. It is built to automatically replicate, rebalance, and recover with minimal configuration and operational overhead.

It supports the PostgreSQL wire protocol, so you can use any available PostgreSQL client drivers to connect from various languages.

CockroachDB Key Features

- Effortless Scaling: As your workload grows, CockroachDB allows you to add more nodes to your cluster, automatically distributing data and balancing the load.

- Standard SQL Support with ACID Transactions: CockroachDB uses standard SQL and supports ACID-compliant transactions, ensuring your data remains accurate and consistent, even during scaling.

- High Availability: CockroachDB can handle failures of nodes, availability zones, or entire regions without downtime. It allows for maintenance tasks like schema changes and rolling upgrades without disrupting service.

When to use CockroachDB

Use CockroachDB when you need a super reliable database that is easy to scale and handles lots of data across different locations. It’s perfect for apps that require high availability and fast performance, even during failures.

When not to use CockroachDB

CockroachDB is an innovative solution, but it may not be ideal for every use case. You may encounter limitations and edge cases if your application relies heavily on advanced or non-standard SQL features. It’s unsuitable for scenarios requiring extremely low-latency, single-node performance, as its distributed architecture can introduce overhead.

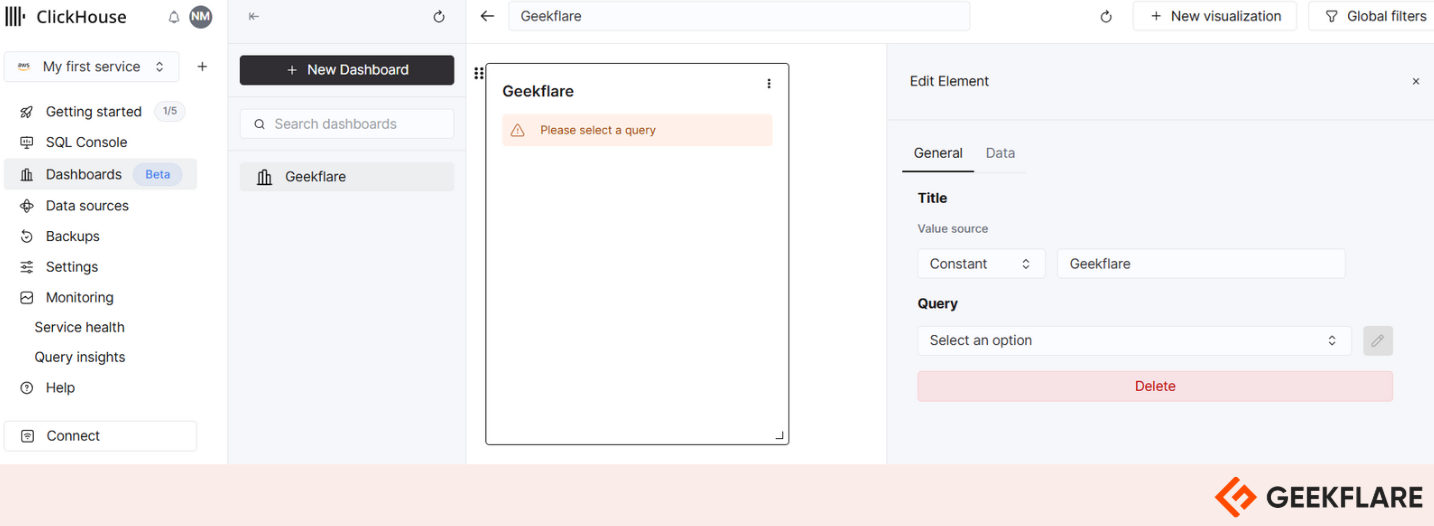

5. ClickHouse

ClickHouse is a fast, open-source database designed for analyzing large amounts of data (OLAP). It’s built for speed and efficiency, processing over 2 terabytes per second by using hardware to its full potential.

With multi-master asynchronous replication, ClickHouse can run across multiple data centers, ensuring no single point of failure. If a node or even a whole data center goes down, reads and writes remain unaffected.

ClickHouse organizes your data efficiently, making analyzing and building reports easy. It uses standard SQL, so you don’t need to learn new APIs. Its columnar storage keeps more data in memory, enabling quicker responses than row-based systems with similar hardware.
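To see why a columnar layout helps analytical queries, here is a toy comparison in plain Python. It is only an illustration of the idea, not ClickHouse's storage engine, and the sample page-view records are made up.

```python
# Row-oriented layout: each record is stored together.
rows = [
    {"url": "/home", "country": "DE", "duration_ms": 120},
    {"url": "/pricing", "country": "US", "duration_ms": 340},
    {"url": "/home", "country": "US", "duration_ms": 95},
]

# Column-oriented layout: each column is stored as its own contiguous list.
columns = {
    "url": [r["url"] for r in rows],
    "country": [r["country"] for r in rows],
    "duration_ms": [r["duration_ms"] for r in rows],
}


def avg_duration_row_store(records):
    """Iterates over whole records to pull one field out of each."""
    return sum(r["duration_ms"] for r in records) / len(records)


def avg_duration_column_store(cols):
    """Reads a single column; the other columns are never accessed."""
    values = cols["duration_ms"]
    return sum(values) / len(values)


print(avg_duration_row_store(rows), avg_duration_column_store(columns))
```

Both functions return the same average, but the columnar version only needs the one column the query asks for. On disk, that means less data to read, and values of the same type stored side by side tend to compress better.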

It’s cost-effective, too. ClickHouse works well with affordable hardware, including traditional disk drives, without sacrificing speed. It minimizes CPU and disk usage, optimizing every part of your infrastructure.

The system scales easily, running on a single server or thousands of nodes in a cluster. It’s a great choice for web analytics, finance, IoT, business intelligence, eCommerce, monitoring, etc.

ClickHouse Key Features

- Open Source: ClickHouse is licensed under Apache 2.0, making it transparent and open for community input.

- Easy Integration: Works well with tools like Kafka, Spark, Grafana, Hadoop, PostgreSQL, and MySQL.

- Columnar Storage: Stores data by columns, not rows, for faster queries and better compression.

- Scalable: Handles huge datasets, from terabytes to petabytes, and supports vertical and horizontal scaling.

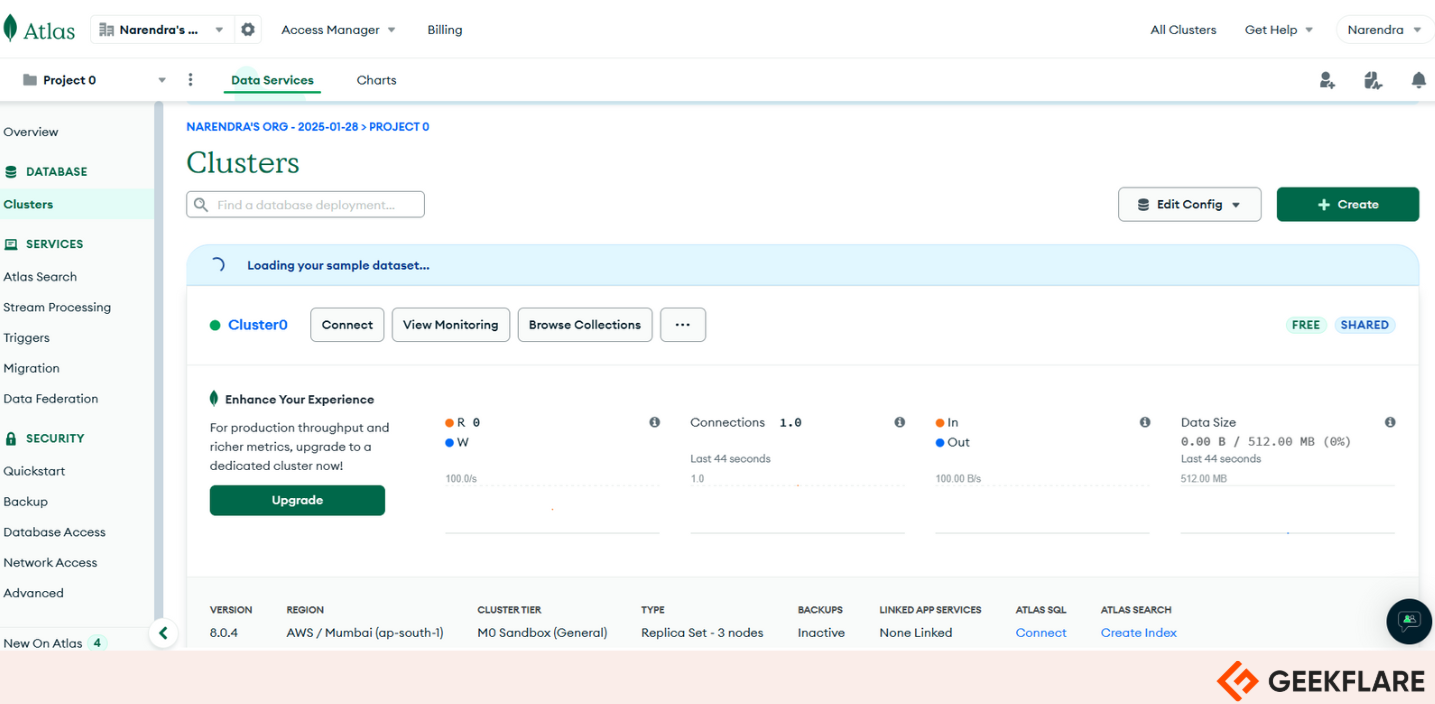

6. MongoDB

MongoDB Community Edition is a free, source-available database that you can manage yourself (it is licensed under the Server Side Public License, which is not an OSI-approved open-source license). It’s a flexible, scalable NoSQL database that works well for websites, mobile apps, and big data projects.

Instead of using tables like traditional databases, MongoDB stores data as documents, similar to JSON files. All related data, like a user’s contact details and access levels, are grouped in one place. When you retrieve a user’s data, everything connected to it comes along—no need for complex joins.
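The difference between a document and a set of joined tables can be sketched with plain Python dictionaries. No MongoDB driver is used here, and the field names and the `load_user_relational` helper are hypothetical, chosen only to mirror the user example above.

```python
import json

# A hypothetical user document: related data is embedded in one place.
user_doc = {
    "_id": 1,
    "name": "Ada",
    "contact": {"email": "ada@example.com", "phone": "555-0100"},
    "access_levels": ["admin", "billing"],
}

# The relational equivalent: three tables linked by user_id.
users = [{"user_id": 1, "name": "Ada"}]
contacts = [{"user_id": 1, "email": "ada@example.com", "phone": "555-0100"}]
access = [{"user_id": 1, "level": "admin"}, {"user_id": 1, "level": "billing"}]


def load_user_relational(user_id):
    """Rebuild the same structure by joining the three tables by hand."""
    user = next(u for u in users if u["user_id"] == user_id)
    contact = next(c for c in contacts if c["user_id"] == user_id)
    levels = [a["level"] for a in access if a["user_id"] == user_id]
    return {
        "_id": user["user_id"],
        "name": user["name"],
        "contact": {"email": contact["email"], "phone": contact["phone"]},
        "access_levels": levels,
    }


print(json.dumps(user_doc, indent=2))
```

With the document model, a single lookup by `_id` returns everything in `user_doc`. The relational version has to combine three tables to produce the same result.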

MongoDB is versatile and can handle many types of applications. With the Community Edition, you can host it on your computer or in the cloud. If you want to try a managed option, you can use MongoDB Atlas for free, which supports features like full-text and vector search locally and in the cloud.

The Community Edition includes essential security features like encryption, authentication, role-based access, and secure connections. However, setting up and managing these security settings takes time and effort.

MongoDB Key Features

- Flexible Schema: Works great for unique or unpredictable data setups.

- Simple Clustering: Setting up sharding and clustering is easy; configure it once and you’re done.

- Easy Scaling: Adding or removing nodes in a cluster is straightforward.

- Transactions: Supports multi-document ACID transactions, including across sharded clusters.

- Fast Writes: Handles high-speed data writes, making it perfect for analytics or caching.

When to use MongoDB

MongoDB is a flexible database that bridges the gap between structured SQL and unstructured NoSQL. It’s great for quickly building prototypes because it doesn’t require a strict schema. When scaling is needed, MongoDB shines without the high costs of cloud SQL services.

When not to use MongoDB

MongoDB’s flexible schema can cause problems if not managed well. Issues like mismatched data, missing values, or unnecessary fields can quickly occur. Since MongoDB doesn’t enforce strict data rules by default (schema validation is optional), your application must handle data integrity, which can be challenging without proper planning.

7. RethinkDB

RethinkDB is an open-source JSON database explicitly designed for real-time web apps. Unlike traditional databases, it automatically pushes updated query results to applications, so developers don’t have to keep checking for changes. This unique feature makes building scalable real-time applications much easier and faster.
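The push model described above can be sketched with a small in-memory class. This is not RethinkDB's client API: the `Table` class and its methods are hypothetical, written only to show a subscriber receiving changes without polling. The `old_val` and `new_val` keys mirror the shape of the change documents that RethinkDB changefeeds deliver.

```python
class Table:
    """Hypothetical in-memory table that pushes changes to subscribers."""

    def __init__(self):
        self._rows = {}
        self._subscribers = []

    def changes(self, callback):
        """Register a callback that is invoked with every change."""
        self._subscribers.append(callback)

    def insert(self, key, value):
        """Store a value and notify every subscriber about the change."""
        old = self._rows.get(key)
        self._rows[key] = value
        change = {"old_val": old, "new_val": value}
        for notify in self._subscribers:
            notify(change)


received = []
scores = Table()
scores.changes(received.append)  # subscribe once; no polling loop is needed

scores.insert("alice", {"score": 10})
scores.insert("alice", {"score": 15})

print(received)
```

The application registers interest once and then reacts to each change as it arrives, instead of re-running the query on a timer.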

Its server is built in C++ and works on 32-bit and 64-bit Linux systems, as well as macOS 10.7 and later. Its client drivers work on any platform supported by their programming language. Both the server and client libraries are available under the Apache License v2.0.

RethinkDB needs at least 2GB of RAM to run well, but it’s flexible and can handle large on-disk data even on low-memory systems, including Amazon EC2 instances, thanks to its custom caching engine.

RethinkDB Key Features

- Query Language: An easy-to-use query system similar to JavaScript that is perfect for complex searches.

- JSON Storage: Data is stored in JSON, which is great for modern apps.

- Integration-Friendly: Compatible with popular frameworks and languages like Node.js, Python, Ruby, and Java.

When to use RethinkDB

RethinkDB is a good option if your app needs real-time updates to data. Many modern apps need to push data to users instantly, and RethinkDB is designed for that.

When not to use RethinkDB

RethinkDB may not be the best choice if you need complete ACID transactions or strict schema enforcement; in such cases, relational databases like MySQL or PostgreSQL are better. Tools like Hadoop or column-based databases like Vertica are more suitable for deep computational analytics.

8. SQLite

SQLite is a lightweight, self-contained SQL database that doesn’t need a server or any setup. It’s free to use for anything—personal or commercial—and is one of the most widely used databases in the world, powering countless applications.

SQLite stores the entire database (tables, indexes, triggers, views) in a single file. It works directly with disk files, skipping the need for a server. The database file format is cross-platform, so it can easily move between different systems (e.g., 32-bit and 64-bit).

SQLite is ideal for embedded systems, applications, and scenarios where simplicity and portability are more important than heavy-duty features. It’s often considered a replacement for basic file operations like fopen().
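A minimal sketch of the single-file, no-server model, using Python's built-in sqlite3 module. The file name `notes.db` and the table layout are made up for illustration.

```python
import os
import sqlite3
import tempfile

# Hypothetical file name; any writable path works.
db_path = os.path.join(tempfile.mkdtemp(), "notes.db")

# Connecting creates the file if it does not exist; no server is started.
conn = sqlite3.connect(db_path)
conn.execute("CREATE TABLE notes (id INTEGER PRIMARY KEY, body TEXT NOT NULL)")
conn.execute("INSERT INTO notes (body) VALUES (?)", ("first note",))
conn.commit()
conn.close()

# Reopen the same file later and read the data back.
conn = sqlite3.connect(db_path)
rows = conn.execute("SELECT id, body FROM notes").fetchall()
conn.close()

print(rows)
```

Everything lives in the one file at `db_path`, so copying that file is enough to move or back up the database.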

SQLite Key Features

- Compact Size: Library size is less than 750 KiB, making it lightweight.

- Zero-Configuration: Requires no installation, setup, or administration.

- Single-File Database: Stores the entire database in a single disk file, simplifying file management.

When to use SQLite

SQLite is an excellent choice if your app is straightforward and you don’t need a complex database. It works well for small to medium CMSs and demo apps.

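When not to use SQLite

SQLite is great, but it lacks some features found in server-based SQL databases, such as clustering and stored procedures. It has no network server, so clients can’t connect to it remotely; applications (and the bundled sqlite3 command-line shell) open the database file directly. Only one writer can modify the database at a time, so performance can suffer as your app’s write concurrency grows.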

9. Apache Cassandra

Apache Cassandra is a free, open-source NoSQL database trusted by thousands of companies to manage massive amounts of data reliably and quickly. It scales easily and stays fast, even on basic hardware or in the cloud, making it great for critical applications.

Its design ensures no single point of failure, so it keeps running smoothly even if a whole data center goes down, with no data loss. It works in the cloud, on-premises, or across both.

When you need to scale up by adding nodes or data centers, Cassandra moves data between nodes quickly. With Zero Copy Streaming, this process is up to 5x faster, making it ideal for cloud and Kubernetes setups.

Apache Cassandra Key Features

- Fault Tolerant: Cassandra replicates data across multiple data centers, ensuring low latency for users and reliable performance even during regional outages. If a node fails, it can be replaced without any downtime.

- Security and Monitoring: Cassandra’s audit logging tracks all database activity with minimal impact on performance. The fqltool lets you capture and replay workloads to analyze how the system performs.

- Distributed: Cassandra is built to prevent data loss, even if a whole data center fails. There’s no single point of failure or network bottleneck, and every node in the cluster works the same way.

When to use Apache Cassandra

Cassandra is perfect for handling massive data, like logs and analytics. It’s designed for high availability and can manage huge workloads without downtime. Big names like Apple and Netflix use it for its scalability and reliability.

When not to use Apache Cassandra

Cassandra isn’t the best choice if you need complex aggregations or advanced query features such as joins. By default it favors high availability over strict consistency (consistency levels are tunable per operation), so it’s a poor fit for systems that must always read the most recent write unless you configure it accordingly.
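The consistency trade-off can be reasoned about with a common rule of thumb for replicated stores: a read is guaranteed to see the latest acknowledged write when the number of replicas read (R) plus the number written (W) exceeds the replication factor (N). The sketch below only does that arithmetic; the function names and the replication factor of 3 are assumptions for illustration, not part of any Cassandra driver.

```python
def quorum(replication_factor):
    """Number of replicas that make up a majority."""
    return replication_factor // 2 + 1


def reads_see_latest_write(replication_factor, write_replicas, read_replicas):
    """True when every read set must overlap every write set (R + W > N)."""
    return read_replicas + write_replicas > replication_factor


RF = 3  # assumed replication factor for this sketch

# Writing and reading one replica each: fast and highly available,
# but a read may land on a replica that has not received the latest write.
one_one = reads_see_latest_write(RF, write_replicas=1, read_replicas=1)

# Writing and reading a quorum: the read and write sets always share a replica.
quorum_quorum = reads_see_latest_write(RF, quorum(RF), quorum(RF))

print(one_one, quorum_quorum)
```

Choosing larger read and write sets buys stronger consistency, but each operation then waits on more replicas.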

10. Apache CouchDB

Apache CouchDB is a free, open-source NoSQL database maintained by the Apache Software Foundation. It is simple to use, scalable, and excellent at handling data replication. It stores data in JSON format using a document-based model.

It is a great choice for a single-node database, working well with any application server. Most users start with a single-node setup and can later upgrade to a cluster for more demanding needs.

CouchDB can also run as a cluster, combining multiple servers or virtual machines into a single database server. This setup increases capacity and ensures high availability without requiring changes to APIs.

Apache CouchDB makes it easy to access your data wherever you need it. It uses the Couch Replication Protocol, which works across all systems, from global server clusters to mobile phones and web browsers.

You can store your data securely on your own servers or with major cloud providers. CouchDB is a great choice for web and native apps because it uses JSON and supports storing binary files.

Because replication works the same way on servers, mobile devices, and browsers, CouchDB can support offline functionality while staying reliable and fast. CouchDB also offers an easy-to-use query language and optional MapReduce for powerful and efficient data access.

Apache CouchDB Key Features

- Offline Data Sync: Syncs data even when offline.

- Mobile and Web Versions: Offers tools like PouchDB and CouchDB Lite for mobile and web use.

- Easy Clustering: Supports clustering with built-in data redundancy.

- HTTP and JSON: Works with HTTP protocol and uses JSON for storing data.

When to use Apache CouchDB

Apache CouchDB is designed to work even when offline, making it a great choice for situations like mobile apps. In such cases, data created on the user’s device is stored locally. As devices aren’t always online, the database efficiently syncs updates when a connection is available, resolving conflicts effortlessly using the powerful Couch Replication Protocol.

When not to use Apache CouchDB

Apache CouchDB is a poor fit for workloads outside its design goals. It typically needs more storage than other databases because it keeps extra copies of data and tracks conflicts, and that bookkeeping can also slow down writes. CouchDB isn’t ideal if you need the database to manage schemas, as it doesn’t handle schema changes well.


11. FerretDB

FerretDB is an open-source document database that works like MongoDB but stores data in PostgreSQL or other databases. It lets you use MongoDB commands while keeping your data in PostgreSQL.

FerretDB accepts the same commands, drivers, and tools as MongoDB and translates them into queries against its backend database. It’s a low-cost option for creating scalable, enterprise-grade databases that can handle large, complex searches and transactions.

Instead of tables with rows and columns like traditional databases, FerretDB stores data as JSON documents, making it great for modern apps and flexible integrations.

FerretDB is fast, efficient, and highly customizable, fitting the needs of both beginners and experienced users. It’s a cost-effective way to handle large and complex data with ease.

FerretDB Key Features

- Open Source Licensing: FerretDB is released under the Apache 2.0 license, so you can use and modify it without being tied to a specific vendor.

- Flexible Deployment: FerretDB works on both on-premises servers and in the cloud, adapting to different infrastructure setups.

- Multiple Backend Support: FerretDB supports both PostgreSQL and SQLite, giving you options for your database engine.

12. TimescaleDB

TimescaleDB is a free, open-source database built on PostgreSQL, designed to handle time-series data efficiently. It’s great for storing and analyzing data from IoT devices, financial systems, DevOps monitoring, and more.

Unlike regular databases, TimescaleDB focuses on time-based data, making it ideal for tasks like tracking temperature readings from a greenhouse sensor every second. It’s perfect for managing trends and insights over time.

With features like automatic data partitioning (hypertables), compression, and continuous aggregation, TimescaleDB handles large-scale data efficiently without compromising query performance. It supports standard SQL, which means you can use familiar tools and queries while benefiting from time-series optimizations.
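The idea behind time-based partitioning can be sketched in plain Python. This is a conceptual illustration, not TimescaleDB's implementation: the one-day chunk interval, the function names, and the sample readings are all assumptions.

```python
from collections import defaultdict
from datetime import datetime, timedelta, timezone

CHUNK_INTERVAL = timedelta(days=1)  # assumed chunk size for this sketch
EPOCH = datetime(1970, 1, 1, tzinfo=timezone.utc)


def chunk_start(ts):
    """Return the start of the time chunk that a timestamp falls into."""
    buckets = (ts - EPOCH) // CHUNK_INTERVAL
    return EPOCH + buckets * CHUNK_INTERVAL


def partition(readings):
    """Group (timestamp, value) readings into per-chunk lists."""
    chunks = defaultdict(list)
    for ts, value in readings:
        chunks[chunk_start(ts)].append((ts, value))
    return dict(chunks)


readings = [
    (datetime(2024, 5, 1, 8, 0, tzinfo=timezone.utc), 21.5),
    (datetime(2024, 5, 1, 20, 0, tzinfo=timezone.utc), 19.0),
    (datetime(2024, 5, 2, 8, 0, tzinfo=timezone.utc), 22.1),
]
chunks = partition(readings)

print({start.isoformat(): len(rows) for start, rows in chunks.items()})
```

A query for a given time range only has to look at the chunks that overlap it, which is what keeps queries on recent data fast as the total volume grows.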

TimescaleDB Key Features

- Built on PostgreSQL: TimescaleDB is packaged as a PostgreSQL extension, so if you already use PostgreSQL, it works with your existing tools and queries.

- Hypertables: Automatically partitions large time-series data into smaller, more manageable chunks while maintaining a seamless query experience.

- Flexible Schema: Supports both relational and schemaless setups, depending on your needs.

When to use TimescaleDB

Use TimescaleDB to manage and analyze time-series data, like IoT sensors, financial trends, or DevOps metrics, where efficient storage, fast queries, and time-based insights are essential.

When not to use TimescaleDB

Avoid TimescaleDB for non-time-series data, small datasets, or use cases requiring advanced NoSQL features.

More Open Source and Free Database Software Options

I’ve listed more database options for you to choose from, depending on your project needs.

| Open Source Database | Description |
|---|---|
| 13. Redis | An open-source, in-memory database that stores data as key-value pairs. |
| 14. Firebird | A lightweight, open-source SQL relational database offering cross-platform compatibility. |
| 15. OrientDB | A multi-model database supporting graph, document, object, and key-value models with ACID compliance and high scalability. |
| 16. Apache Druid | A real-time analytics database designed for fast aggregation of high-dimensional data with interactive query capabilities. |
| 17. Neo4j | A graph database for managing and analyzing connected data. |
| 18. Prometheus | A time-series database optimized for monitoring and alerting with a flexible query language. |
| 19. Dgraph | A distributed, scalable graph database designed for complex relationship modeling and high-performance queries. |
| 20. H2 Database | A lightweight relational SQL database written in Java that can run embedded in an application or as a server, with support for in-memory databases. |
| 21. SurrealDB | A cloud-native, distributed database offering multi-model (graph, relational, document) support with a simplified query approach. |
| 22. Berkeley DB | An embedded, high-throughput database library supporting key-value data management with ACID transactions. |
| 23. BigchainDB | A blockchain-inspired distributed database designed for storing large amounts of immutable data. |
| 24. TiDB | A distributed SQL database that scales horizontally and supports MySQL compatibility with strong consistency. |
| 25. etcd | A distributed key-value store ensuring consistent and reliable data storage for critical system configurations. |
| 26. RocksDB | A high-performance embedded key-value store optimized for flash storage and write-heavy workloads. |