Spotting deepfakes is no cakewalk, and it’s getting harder by the minute.

Knowing how to spot deepfakes in 2025 is the need of the hour, given how powerful they are as manipulation tools. Built with deep learning, a branch of AI, deepfakes can produce realistic manipulated audio and video, reinforcing the fact that technology has always been a double-edged sword: there are good things you can do with it, and there are far worse ones.

With that in mind, let’s look at what deepfakes are and how to spot them in 2025, shall we?

What Are Deepfakes?

Put simply, deepfakes are synthetic media made with desktop or smartphone applications. These applications use algorithms to replace parts of the original footage, typically a face or a voice, with someone else’s, as in this deepfake video of Obama.

See how ‘real’ it feels.

That’s the point: to fool or entertain people with synthetic media.

Not long ago, it was very hard to create such convincing videos. Now you only need a powerful graphics card, a good AI face-swap tool, and a few days to get it done.

How to Spot Deepfakes?

Not all deepfakes are created equal, so no single detection technique works on every one of them. Some have telltale signs of being machine-made, while others take careful observation, or even other AI tools, to identify.

And making them is getting simpler by the day, as the algorithms run efficiently on low-end hardware and powerful computing becomes more widely available.

This makes it important for us to be aware of common deepfake scams and vigilant enough to tell them apart from the truth.

Skin Tone

This is the first thing to check. The manipulated portion (usually the face) often differs slightly in skin color, so it doesn’t match the rest of the visible body.

In the image above, the left frame is from a fake video of Ukrainian President Volodymyr Zelenskyy asking his forces and people to surrender to Russia.

But no one was fooled by this mediocre copy-paste job, and it was called out instantly as a deepfake.
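If you want to go beyond eyeballing it, the same skin-tone check can be roughed out in a few lines of code. Treat this as an illustration, not a real detector: it assumes you have already cropped the face and a nearby body part (say, the neck) into lists of RGB pixels, and the function names and the 25.0 threshold are hypothetical choices you would need to tune.

```python
# Sketch only: compares the average color of two hand-picked regions.
# Assumes both regions are lists of (R, G, B) tuples you have already cropped;
# the 25.0 threshold is a hypothetical value, not a calibrated one.
from math import dist

Pixel = tuple[int, int, int]


def mean_color(pixels: list[Pixel]) -> tuple[float, float, float]:
    """Average R, G and B values over a region."""
    if not pixels:
        raise ValueError("region must contain at least one pixel")
    n = len(pixels)
    return (
        sum(p[0] for p in pixels) / n,
        sum(p[1] for p in pixels) / n,
        sum(p[2] for p in pixels) / n,
    )


def tone_gap(face: list[Pixel], body: list[Pixel]) -> float:
    """Euclidean distance between the regions' mean colors (0 to about 441)."""
    return dist(mean_color(face), mean_color(body))


def looks_mismatched(face: list[Pixel], body: list[Pixel], threshold: float = 25.0) -> bool:
    """True when the mean colors differ by more than `threshold`."""
    return tone_gap(face, body) > threshold


if __name__ == "__main__":
    face = [(224, 180, 160), (220, 176, 158), (226, 182, 163)]
    neck = [(190, 140, 120), (188, 138, 118), (192, 142, 122)]
    print(f"gap={tone_gap(face, neck):.1f}", looks_mismatched(face, neck))
```

Lighting and shadows also shift color, so a large gap is a reason to look closer, not proof of a fake.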

Expressions

This is the major giveaway, especially when amateurs masquerade as deepfake experts. Their subpar creations often have unnatural lip movement without human pauses, which makes them easy to identify as fake. Look at this deepfake video of Volodymyr Zelenskyy, for instance.

Some algorithms do account for breathing pauses. Even so, you can often notice that the stoppages are identical and the head movements follow similar patterns, usually too mechanical to ignore.

Blinking is another giveaway. In many deepfakes it follows fixed cycles set by the software, or is missing altogether, which looks less human when you watch it alongside a real video of the same person.
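Blink regularity can also be measured. One measure from blink-detection research is the eye aspect ratio (EAR), which drops sharply when the eye closes. The sketch below assumes a face-landmark detector (not shown) has already produced six points per eye per frame; the function names, the 0.2 threshold, the 2-frame minimum, and the 0.1 regularity cutoff are hypothetical choices for illustration. It flags blinking that is missing or that repeats at suspiciously even intervals.

```python
# Sketch only: flags blinking that is missing or suspiciously regular.
# Assumes a face-landmark detector (not shown) supplies six (x, y) points per
# eye per frame, ordered p1..p6 as in the eye-aspect-ratio (EAR) formula.
# The 0.2, 2-frame and 0.1 values are hypothetical tuning choices.
from math import dist
from statistics import mean, pstdev

Point = tuple[float, float]


def eye_aspect_ratio(eye: list[Point]) -> float:
    """EAR = (|p2-p6| + |p3-p5|) / (2 * |p1-p4|); it drops toward 0 as the eye closes."""
    p1, p2, p3, p4, p5, p6 = eye
    return (dist(p2, p6) + dist(p3, p5)) / (2.0 * dist(p1, p4))


def blink_starts(ears: list[float], threshold: float = 0.2, min_frames: int = 2) -> list[int]:
    """Frame indices where the EAR stays below `threshold` for at least `min_frames`."""
    starts: list[int] = []
    run_start = None
    for i, ear in enumerate(ears + [1.0]):  # sentinel "open" frame closes a trailing run
        if ear < threshold:
            if run_start is None:
                run_start = i
        elif run_start is not None:
            if i - run_start >= min_frames:
                starts.append(run_start)
            run_start = None
    return starts


def blink_verdict(ears: list[float], fps: float, regular_cv: float = 0.1) -> str:
    """Classify the blink pattern using the spread of the gaps between blinks."""
    starts = blink_starts(ears)
    if len(starts) < 3:
        return "not enough blinks to judge (none at all is itself a red flag)"
    gaps = [(b - a) / fps for a, b in zip(starts, starts[1:])]
    cv = pstdev(gaps) / mean(gaps)  # coefficient of variation: 0 means perfectly even
    return "suspiciously regular" if cv < regular_cv else "human-like variation"


if __name__ == "__main__":
    robotic = ([0.3] * 28 + [0.1] * 2) * 5  # a blink every 30 frames, like clockwork
    print(blink_verdict(robotic, fps=30))
```

The coefficient of variation is used so the verdict does not depend on frame rate or on how often the person blinks, only on how evenly the blinks are spaced.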

Eye movement is another clue. Lacking emotions and distractions, an AI-generated face often appears more focused than an average person does while talking.

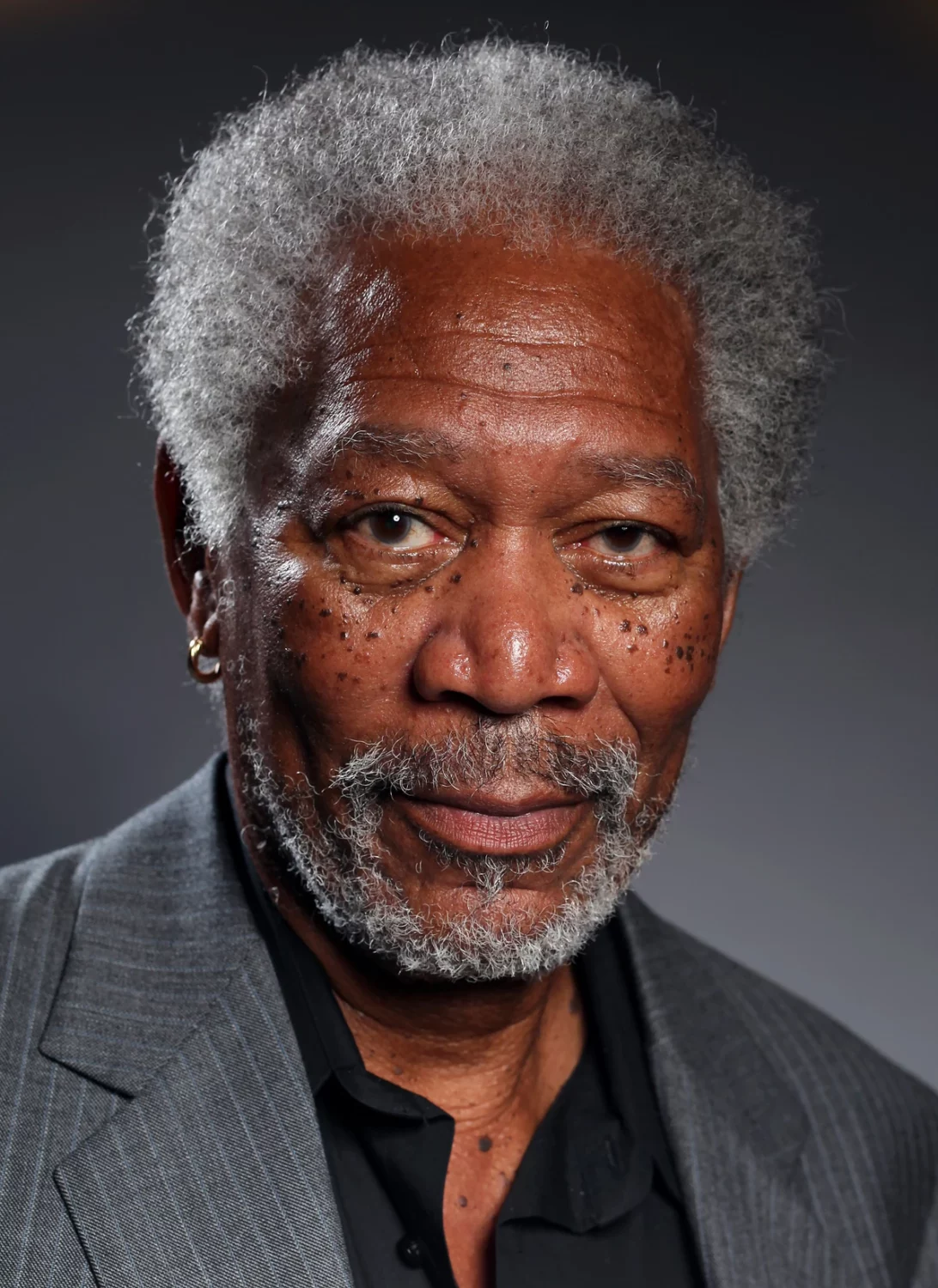

In short, deepfakes are fairly easy to spot unless they are made with (almost) perfect precision, like this deepfake video of Morgan Freeman.

Patches

So, how can you tell that isn’t the real Morgan Freeman?

The good thing about the preceding video is its high quality. Switch to the highest resolution (4K is available) to give yourself a chance to spot the artificial.

The bigger the screen, the better. Alternatively, take a screenshot and zoom in to look for signs of computer work beneath the surface.

Can you see the made-up skin? Small details are where algorithms fail, no matter how sophisticated they are.

The skin looks patchy, and the facial (and head) hair looks unnatural, as if glued on.

This will help you better analyze the following:

I can clearly pick out the synthetic skin, mostly visible just around the mustache, beard, and hairline.

Compare the chin, eyebrows, face shape (the real one is slimmer), nose, and so on with the real one (on the right), and it becomes clear as day.

To reiterate, having the real person side by side makes it easy to tell the fake apart.
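Zooming in works because synthetic skin tends to lack the fine texture of real skin. A rough way to quantify that is to measure how much fine detail each small tile of a grayscale screenshot contains and flag tiles far smoother than the rest. This is a heuristic sketch, not a reliable detector: the function names, the tile size, the 0.25 ratio, and the use of Laplacian variance as the texture measure are illustrative picks, and it assumes the screenshot is already converted to a 2-D list of gray values.

```python
# Sketch only: finds tiles of a grayscale face crop with far less fine texture
# than the rest, a rough proxy for smoothed, "made-up" skin.
# Assumes the screenshot is already a 2-D list of 0-255 gray values. The tile
# size, the 0.25 ratio and the Laplacian-variance measure are illustrative picks.
from statistics import median, pvariance

Gray = list[list[float]]


def laplacian_variance(img: Gray, top: int, left: int, size: int) -> float:
    """Variance of a 4-neighbor Laplacian inside one tile (higher = more texture)."""
    responses = []
    # Skip the outermost image border so every pixel has four neighbors.
    for y in range(max(top, 1), min(top + size, len(img) - 1)):
        for x in range(max(left, 1), min(left + size, len(img[0]) - 1)):
            lap = img[y - 1][x] + img[y + 1][x] + img[y][x - 1] + img[y][x + 1] - 4 * img[y][x]
            responses.append(lap)
    return pvariance(responses) if len(responses) > 1 else 0.0


def smooth_tiles(img: Gray, size: int = 8, ratio: float = 0.25) -> list[tuple[int, int]]:
    """(row, col) corners of tiles whose texture is below `ratio` x the median tile."""
    scores = {
        (top, left): laplacian_variance(img, top, left, size)
        for top in range(0, len(img), size)
        for left in range(0, len(img[0]), size)
    }
    typical = median(scores.values())
    return [corner for corner, score in scores.items() if score < ratio * typical]


if __name__ == "__main__":
    # Synthetic test image: checkerboard "pores" everywhere except a flat top-left patch.
    img = [[100 + 50 * ((x + y) % 2) for x in range(16)] for y in range(16)]
    for y in range(8):
        for x in range(8):
            img[y][x] = 125
    print(smooth_tiles(img))
```

Expect false alarms on naturally smooth areas such as the forehead; at best, this tells you where to zoom in next.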

Details

A lot goes on when we talk. Everyone has their own style, which makes the lips, tongue, chin, cheeks, and so on move in patterns unique to them.

Deepfake tech also has yet to master the inside of the mouth during speech. For instance, you can’t make out individual lower (mandibular) teeth in the Obama deepfake.

All we see is a white strip at the bottom, and there are no signs of tongue movement at all.

Watch any genuine Obama video and you’ll see the man is much more expressive, with far more facial movement than this AI replica.

Notice, too, that the video itself isn’t very clear. It’s low quality, either compressed to hide flaws or limited by computing power.

Transitions

One limitation of deepfake creation is that the video is generated frame by frame. Every frame must be checked for perfect masking to keep the illusion intact.

Because of this, most convincing deepfake videos are very limited in facial movement. They mostly show frontal faces with no side views, because side-to-front transitions can expose the technique’s weaknesses.

Check this still from a Tom Cruise deepfake:

If you slow down the video and watch closely between 35 and 40 seconds, you’ll notice blurred patches in the side-to-front transitions.

These transitions are arguably the hardest part to conceal, and the best place to catch such AI creations.
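Slowing the video down is the manual version of a simple idea: find frames that are suddenly blurrier than the ones around them. The sketch below assumes you already have one sharpness score per frame for the face region (for example, from a Laplacian-variance measure computed elsewhere) and returns the timestamps worth scrubbing to. The function name and the `window` and `drop` values are hypothetical.

```python
# Sketch only: lists moments where the face is much blurrier than in nearby frames,
# the kind of side-to-front transition glitch worth checking in slow motion.
# Assumes one sharpness score per frame for the face region, computed elsewhere
# (e.g. a Laplacian-variance measure). `window` and `drop` are hypothetical values.
from statistics import median


def blurry_moments(sharpness: list[float], fps: float,
                   window: int = 15, drop: float = 0.5) -> list[float]:
    """Timestamps (seconds) of frames scoring below `drop` x the median of nearby frames."""
    flagged = []
    for i, score in enumerate(sharpness):
        lo, hi = max(0, i - window), min(len(sharpness), i + window + 1)
        neighbors = sharpness[lo:i] + sharpness[i + 1:hi]  # up to `window` frames each side
        if neighbors and score < drop * median(neighbors):
            flagged.append(round(i / fps, 2))
    return flagged


if __name__ == "__main__":
    scores = [100.0] * 60
    scores[40:43] = [30.0, 30.0, 30.0]  # a brief blur around 1.3-1.4 s
    print(blurry_moments(scores, fps=30))
```

Comparing each frame with its neighbors, rather than with one global threshold, keeps the check from firing on a clip that is simply low quality throughout.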

So, these are a few things that can help you to spot deepfakes and limit misinformation. To summarize:

| # | Tip |
|---|-----|
| 1 | Check for variations in skin tone. |
| 2 | Look out for unnatural lip sync, robotic or missing blinking, etc. |
| 3 | Zoom in to see whether the skin texture, hair, etc. look true to life. |
| 4 | Compare the facial expressions and talking style with real videos of the person. |
| 5 | Check whether a high-quality version is available. |
| 6 | Do a quick Google search to see whether it has already been exposed as fake. |
| 7 | Note the overall facial proportions and compare them with the real person’s. |
| 8 | Slow down the video to pick up bad transitions. |
| 9 | Check whether the inside of the mouth looks real and detailed. |
| 10 | If you’re unsure, share it with friends and family and ask for their feedback. |

Deepfakes Aren’t Perfect!

While we covered only deepfake video here, AI-generated audio suffers from similar limitations. And though machines are getting better as we speak, they’ll always fall behind the living.

However, your learning isn’t complete until you get your hands dirty. For that, we have put together this guide on what deepfakes are and how to create them.