If you are building an AI agent or RAG pipeline in 2026, web scraping is likely a core part of your infrastructure.

But most developers build these pipelines inefficiently, by scraping raw HTML.

Passing a raw webpage DOM into a Large Language Model (LLM) or a vector database is a mistake. Modern webpages can be up to 90% noise: inline CSS, tracking scripts, massive navigation menus, and footer boilerplate. Feeding this garbage to an LLM not only increases the risk of hallucinations but also burns through your context window and drives up your AI costs.

The secret to a highly efficient data pipeline isn’t just how you scrape a site but also what format you extract the data in.

Let’s break down the optimal web scraping output formats for different use cases and how choosing the right one can cut your token costs by up to 85%.

1. Markdown for RAG and AI Agents

If you are feeding web data to an LLM like GPT-5, Claude, or Gemini, Markdown format is ideal.

Why?

Because LLMs see a great deal of Markdown in their training data. It preserves the semantic structure of a page, such as ## headings, * bullet points, and data tables, without the token overhead of <div> and <span> tags.

However, even standard Markdown includes every link in the header and footer. To fix this, modern scraping APIs use semantic extraction to isolate the core content.

markdown-llm: This format runs the page through a cleaning pass before returning the data. It strips out the navbars, sidebars, and cookie banners, returning only the primary content as clean Markdown. (Saves ~75% on tokens compared to raw HTML).

markdown: Use this if you are doing layout analysis or archiving a page and actually want the sidebar and footer text included, just without the HTML tags.

Use markdown-llm when you need to chunk documents for a vector database. The preserved ## Headings tell your chunking algorithm exactly where to split the text so context isn’t lost.
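To make the chunking idea concrete, here is a minimal sketch of heading-based splitting in Python. It assumes the scraped Markdown is already in a string; the function name `chunk_by_headings` and the sample text are illustrative only and are not part of any scraping API.

```python
import re
from typing import Dict, List


def chunk_by_headings(markdown: str, level: int = 2) -> List[Dict[str, str]]:
    """Split Markdown into chunks, starting a new chunk at each heading of `level`.

    Deeper headings (e.g. ###) stay inside their parent chunk. This simple
    version does not special-case headings that appear inside code fences.
    """
    heading = re.compile(r"^#{%d}\s+(.*)$" % level)
    chunks: List[Dict[str, str]] = []
    title = ""  # text before the first heading gets an empty title
    body: List[str] = []

    def flush() -> None:
        text = "\n".join(body).strip()
        if title or text:
            chunks.append({"title": title, "text": text})

    for line in markdown.splitlines():
        match = heading.match(line)
        if match:
            flush()  # close the previous chunk before starting a new one
            title = match.group(1).strip()
            body = []
        else:
            body.append(line)
    flush()
    return chunks


sample = (
    "Intro line\n\n"
    "## Pricing\nPlan A costs $5.\n\n### Details\nMore.\n\n"
    "## FAQ\nQ and A."
)
chunks = chunk_by_headings(sample)
for chunk in chunks:
    print(repr(chunk["title"]), len(chunk["text"]))
```

Keeping the heading text alongside each chunk lets you store it as metadata in the vector database, so a retrieved chunk still carries the section it came from.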

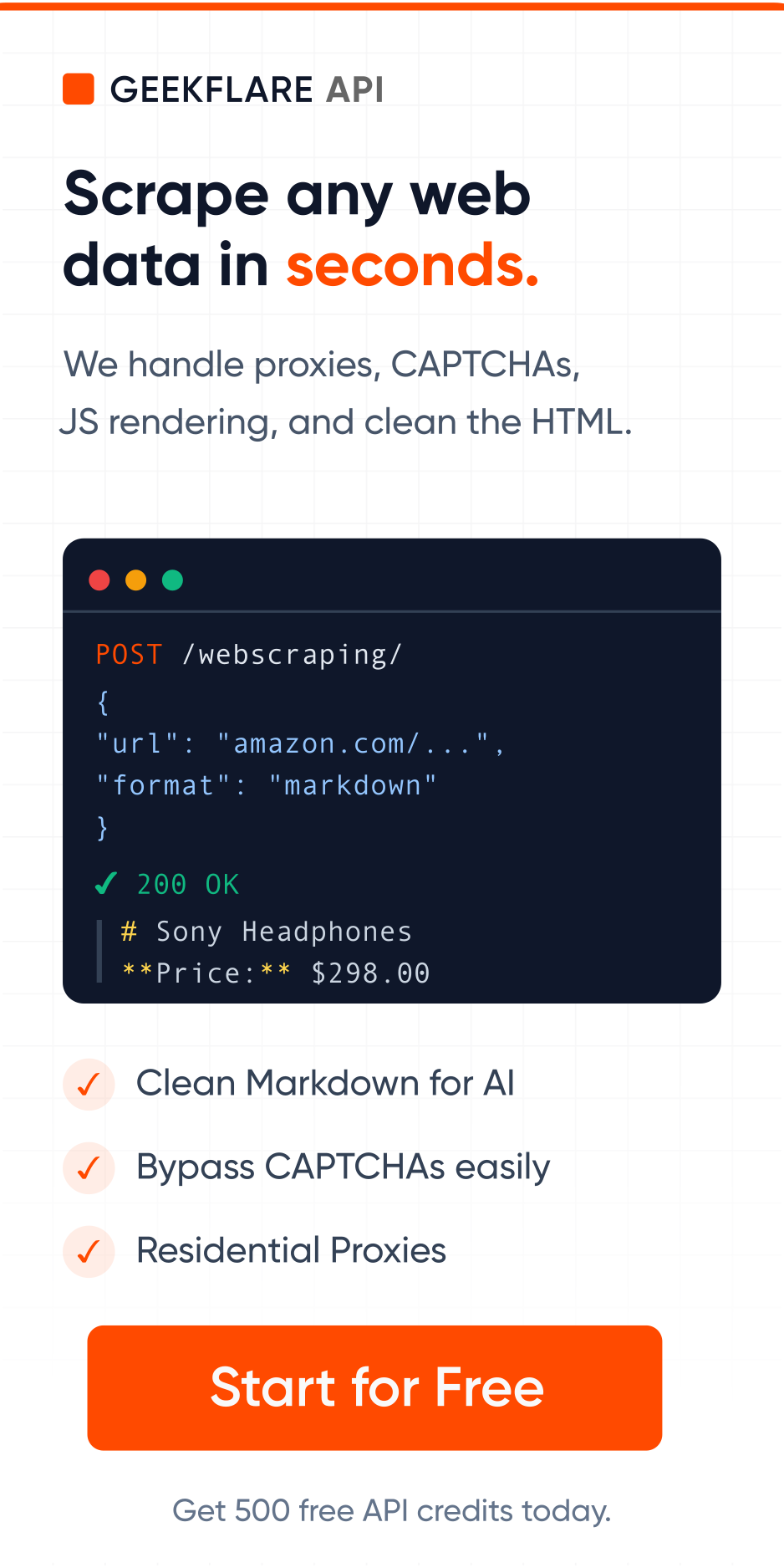

Request payload for LLM-optimized markdown in Geekflare scraping API:

{
  "url": "https://example.com",
  "format": "markdown-llm"
}

2. Text for Vector Embeddings & Batch Processing

Sometimes, you aren’t concerned about headings, bold text, or tables. If you are generating mathematical vector embeddings for semantic search, running traditional NLP sentiment analysis, or doing simple regex keyword matching, any formatting is just wasted space.

For data processing where every single byte counts, you need raw text.

text-llm: This extracts just the main body content of the webpage and strips away everything else. Just the raw words of the web page. (Saves ~85% on tokens).

text: Returns the text of the entire page, including the navigation and footer.

Use text-llm when your priority is maximum token efficiency and you don’t need to retain the visual hierarchy of the data.
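As a rough local illustration of what main-text extraction buys you, the sketch below strips `<script>`, `<style>`, `<nav>`, and `<footer>` content with Python's standard-library `html.parser` and compares sizes. This is an approximation for intuition, not how the API implements text-llm; the names `MainTextExtractor` and `extract_text` and the sample page are made up, and character counts are only a proxy for tokens.

```python
from html.parser import HTMLParser
from typing import List

# Tags whose contents are treated as noise in this sketch.
SKIP_TAGS = {"script", "style", "nav", "footer"}


class MainTextExtractor(HTMLParser):
    """Collects text that is not nested inside any of SKIP_TAGS."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self._parts: List[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self._parts.append(data.strip())

    def text(self) -> str:
        return " ".join(self._parts)


def extract_text(html: str) -> str:
    parser = MainTextExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()


page = (
    "<html><head><style>body{margin:0}</style></head><body>"
    "<nav><a href='/'>Home</a><a href='/blog'>Blog</a></nav>"
    "<main><h1>Pricing</h1><p>Plan A costs $5.</p></main>"
    "<footer>Copyright Example Inc.</footer>"
    "<script>track()</script></body></html>"
)
text = extract_text(page)
print(text)
print(f"characters: {len(page)} raw HTML vs {len(text)} extracted text")
```

On real pages the ratio depends heavily on the site, so measure it on your own targets rather than relying on a single headline percentage.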

Request payload for just text in Geekflare scraping API:

{
  "url": "https://example.com",
  "format": "text-llm"
}

3. HTML for DOM Parsing & Web Archiving

While AI developers are moving away from HTML, it still has its place. If you are using a traditional HTML parsing library like Cheerio or BeautifulSoup, or if you are passing data to an AI model specifically fine-tuned to understand DOM structures, you need HTML.

html-llm: An upgrade over raw HTML. It keeps the DOM structure intact but strips out the <script>, <style>, <nav>, and <footer> tags. (Saves ~60% on tokens).

html: The raw, unmodified DOM exactly as the browser renders it.

Use standard html when you need to republish a page exactly as it looks, or html-llm when your scraper relies on specific CSS selectors to extract data.

4. JSON for Structured Data

If you are a data analyst pushing web data into a Postgres database or a Pandas DataFrame, you don’t want giant blocks of text. You want structured data.

json: Modern scraping APIs can automatically parse page metadata, such as OpenGraph tags, Schema.org markup, titles, authors, and publish dates, and return it alongside the content in a clean JSON object.

Use this when building news aggregators, tracking competitor pricing, or feeding data into no-code tools like Zapier.
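For a sense of what metadata parsing involves, here is a small standard-library sketch that pulls OpenGraph `<meta property="og:...">` tags out of a page and flattens them into a record. The article does not show the API's actual JSON response shape, so the `record` layout, the class and function names, and the sample HTML are assumptions for illustration.

```python
import json
from html.parser import HTMLParser
from typing import Dict


class OpenGraphParser(HTMLParser):
    """Collects <meta property="og:*" content="..."> pairs."""

    def __init__(self) -> None:
        super().__init__()
        self.metadata: Dict[str, str] = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        prop = attr.get("property") or ""
        content = attr.get("content")
        if prop.startswith("og:") and content is not None:
            # Drop the "og:" prefix so keys read like column names.
            self.metadata[prop[3:]] = content


def extract_open_graph(html: str) -> Dict[str, str]:
    parser = OpenGraphParser()
    parser.feed(html)
    parser.close()
    return parser.metadata


page = (
    '<head><meta property="og:title" content="Pricing Plans">'
    '<meta property="og:type" content="article"/>'
    '<meta name="viewport" content="width=device-width"></head>'
)
og = extract_open_graph(page)
# Hypothetical flat record, ready for a database row or a DataFrame.
record = {"url": "https://example.com", **og}
print(json.dumps(record, indent=2))
```

A flat dictionary like this maps directly onto a table row, which is the main reason structured output is easier to load than free text.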

Lite scraping to save money

Optimizing your output format saves you money on the LLM side. But you can also save money on the scraping side by understanding how your target website is built.

Many developers default to using heavy headless browsers like Puppeteer to scrape every site. But if you are scraping a low-security, text-heavy site like Wikipedia or a standard blog, spinning up a headless browser is overkill.
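One way to decide whether a headless browser is needed is to look at what a plain HTTP response already contains. The heuristic below is a sketch under assumptions: the function name `looks_static` and the 200-character threshold are arbitrary choices, and the check only counts text outside `<script>` and `<style>`.

```python
from html.parser import HTMLParser


class _VisibleText(HTMLParser):
    """Counts characters of text that sit outside <script> and <style>."""

    def __init__(self) -> None:
        super().__init__()
        self._hidden = 0
        self.chars = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._hidden += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._hidden > 0:
            self._hidden -= 1

    def handle_data(self, data):
        if self._hidden == 0:
            self.chars += len(data.strip())


def looks_static(html: str, min_chars: int = 200) -> bool:
    """Return True if the raw response already carries enough visible text.

    min_chars is an arbitrary threshold; tune it for your own targets.
    """
    parser = _VisibleText()
    parser.feed(html)
    parser.close()
    return parser.chars >= min_chars


static_page = (
    "<html><body><article>"
    + "<p>Plain server-rendered paragraph.</p>" * 10
    + "</article></body></html>"
)
js_shell = (
    '<html><body><div id="root"></div><script>'
    + "render();" * 50
    + "</script></body></html>"
)
print(looks_static(static_page), looks_static(js_shell))
```

If the raw response passes a check like this, a lightweight fetch is probably enough; an almost empty shell with a large script bundle suggests the content is rendered client-side.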

Using the Geekflare Web Scraping API, you can pair your optimized formats with Lite Scraping.

Clean up your data pipeline.

You don’t need to write custom scripts to clean raw HTML. Let the Web Scraping API do that for you.

The Geekflare Web Scraping API natively supports all 7 formats (html, html-llm, markdown, markdown-llm, text, text-llm, json) out of the box.
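If you build request payloads in code, a small guard against typos in the format name can save a wasted call. The sketch below uses only the `url` and `format` fields shown in the payloads above and the seven format names listed here; the helper name `build_payload` is hypothetical, and the endpoint and authentication are omitted because the article does not specify them.

```python
import json

# The seven format names listed in the article.
SUPPORTED_FORMATS = (
    "html", "html-llm",
    "markdown", "markdown-llm",
    "text", "text-llm",
    "json",
)


def build_payload(url: str, output_format: str = "markdown-llm") -> str:
    """Return the JSON request body, rejecting unknown format names early."""
    if output_format not in SUPPORTED_FORMATS:
        raise ValueError(
            f"unsupported format {output_format!r}; "
            f"expected one of {', '.join(SUPPORTED_FORMATS)}"
        )
    return json.dumps({"url": url, "format": output_format})


print(build_payload("https://example.com"))
print(build_payload("https://example.com", "text-llm"))
```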