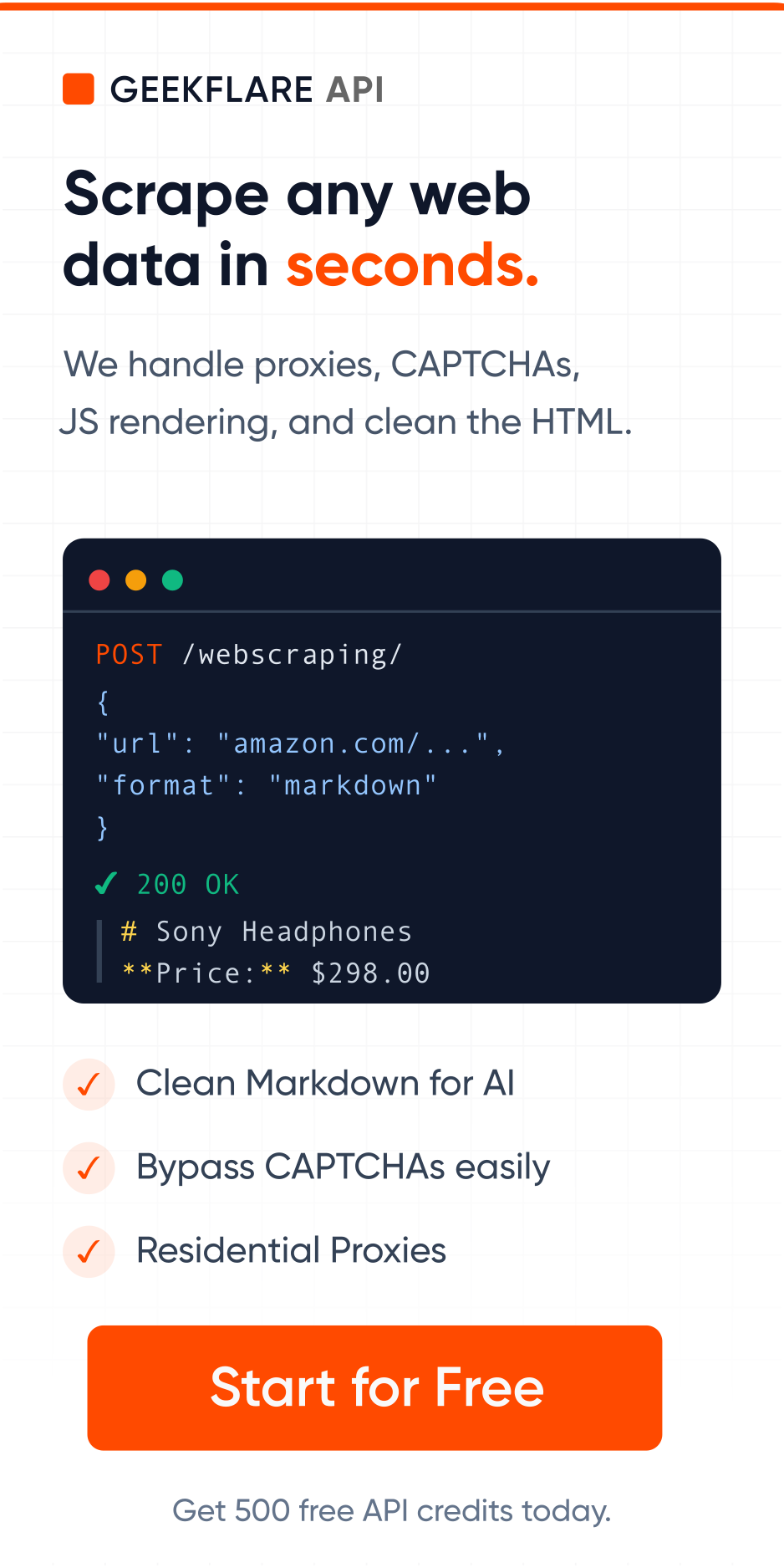

Raw web data is messy, and feeding it into your AI pipeline wastes tokens, slows responses, and inflates your OpenAI or Anthropic bill. Today we’re shipping three new output formats built for LLMs: markdown-llm, text-llm, and html-llm.

When you scrape a webpage and pass the result directly into an LLM, you’re not just sending the article. You’re sending everything, including navigation menus, cookie banners, footer links, ad scripts, social widgets, and boilerplate markup that the model has to wade through.

This bloat eats up context window space and adds noise that can hurt answer accuracy. For RAG pipelines and agentic workflows where dozens of pages might be processed in a single run, the costs and latency compound fast.

Introducing the -llm formats

Earlier this year we launched standard markdown extraction. Today we’re adding three new output formats to the Web Scraping API that go further and clean the DOM behind the scenes so you don’t have to.

| Format | What you get |
| --- | --- |
| markdown-llm | Clean markdown with all boilerplate stripped. Ideal for agents that reason over structured content. |
| text-llm | Pure prose content, maximally compressed. The most token-efficient option, saving up to 85% of tokens compared with raw HTML. |
| html-llm | For pipelines that need HTML structure without the noise. |
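To check the savings on your own pages, you can compare a page’s raw HTML with its text-llm output. The sketch below is only an estimate: it assumes the common rule of thumb of roughly four characters per token for English text, so exact numbers will depend on your model’s tokenizer. The function names are hypothetical.

```python
import math


def estimate_tokens(text: str) -> int:
    """Rough token estimate, assuming ~4 characters per token (English text)."""
    return math.ceil(len(text) / 4)


def token_savings(raw: str, cleaned: str) -> float:
    """Fraction of estimated tokens saved by sending `cleaned` instead of `raw`."""
    raw_tokens = estimate_tokens(raw)
    if raw_tokens == 0:
        return 0.0  # nothing to save on an empty page
    return 1 - estimate_tokens(cleaned) / raw_tokens
```

For example, a 400-character raw page (about 100 estimated tokens) that cleans down to 60 characters (about 15 tokens) gives a saving of 0.85, or 85%.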

All three -llm formats share the same pipeline: we parse the full DOM, identify and remove non-content regions, and then serialize the remaining content into the requested format.
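To make those three steps concrete, here is a minimal sketch of the same idea using Python’s standard-library html.parser. It is not the -llm implementation, which isn’t described here: it assumes a fixed, hypothetical list of non-content tags (NON_CONTENT_TAGS) and serializes only plain text, roughly in the spirit of text-llm.

```python
from html.parser import HTMLParser

# Hypothetical heuristic: treat everything inside these tags as non-content.
NON_CONTENT_TAGS = {"head", "nav", "header", "footer", "aside", "script",
                    "style", "noscript", "form", "iframe", "svg"}
# Tags that should start a new line when serialized to plain text.
BLOCK_TAGS = {"p", "div", "section", "article", "main", "li", "br", "tr",
              "h1", "h2", "h3", "h4", "h5", "h6", "blockquote", "pre"}


class ContentExtractor(HTMLParser):
    """Walks the parsed HTML and keeps text that sits outside non-content regions."""

    def __init__(self) -> None:
        super().__init__(convert_charrefs=True)
        self._skip = 0  # > 0 while inside a non-content region
        self._parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in NON_CONTENT_TAGS:
            self._skip += 1
        elif tag in BLOCK_TAGS and not self._skip:
            self._parts.append("\n")

    def handle_startendtag(self, tag, attrs):
        # Self-closing tags such as <br/> never open a region.
        if tag in BLOCK_TAGS and not self._skip:
            self._parts.append("\n")

    def handle_endtag(self, tag):
        if tag in NON_CONTENT_TAGS:
            self._skip = max(0, self._skip - 1)
        elif tag in BLOCK_TAGS and not self._skip:
            self._parts.append("\n")

    def handle_data(self, data):
        if not self._skip:
            self._parts.append(data)

    def text(self) -> str:
        # Serialize: collapse whitespace within each line and drop empty lines.
        raw = "".join(self._parts)
        lines = (" ".join(line.split()) for line in raw.splitlines())
        return "\n".join(line for line in lines if line)


def extract_text(html: str) -> str:
    parser = ContentExtractor()
    parser.feed(html)
    parser.close()
    return parser.text()


sample = ("<nav>Home | Blog</nav><main><h1>Title</h1>"
          "<p>Hello <b>world</b>.</p></main><footer>Footer links</footer>")
print(extract_text(sample))  # prints "Title" and "Hello world." on separate lines
```

A tag list like this is the simplest possible way to "identify non-content regions." Real pages often put navigation, ads, and cookie banners inside generic div elements, which is why production cleaning needs more than a fixed set of tag names.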

Feed your AI agents only what they need. These new formats deliver clean content to save tokens.

With these additions, the Web Scraping API now offers seven output formats. You can read about all of them in the docs.

Who this is for

→ If you’re building RAG pipelines, you can now store and index LLM-optimized content from the start.

→ If you’re running AI agents that browse and extract from the web, the -llm formats reduce the per-page token cost significantly, which matters when your agent is hitting dozens of URLs per task.

→ If you’re already using the Geekflare Scraping API with standard markdown or HTML output, switching to the -llm variants is a one-line change to your format parameter.

Getting started

The new formats are available now on all existing plans. To switch, set your format parameter to markdown-llm, text-llm, or html-llm when calling the scraping API. Full documentation with examples and supported selectors is available in the API reference.
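As a rough illustration of that change, the sketch below builds (but does not send) a POST request with Python’s standard library. The endpoint URL, the x-api-key header name, and the url body field are assumptions for illustration; only the format parameter and its three -llm values come from this announcement, so check the API reference before relying on any of it.

```python
import json
import urllib.request

# Assumptions (verify against the API reference): endpoint URL, header name,
# and the "url" body field. The "format" values come from this announcement.
API_URL = "https://api.geekflare.com/webscraping"
LLM_FORMATS = {"markdown-llm", "text-llm", "html-llm"}


def build_scrape_request(page_url: str, api_key: str,
                         fmt: str = "markdown-llm") -> urllib.request.Request:
    """Build a POST request asking the scraping API for an -llm output format."""
    if fmt not in LLM_FORMATS:
        raise ValueError(f"expected one of {sorted(LLM_FORMATS)}, got {fmt!r}")
    body = json.dumps({"url": page_url, "format": fmt}).encode("utf-8")
    return urllib.request.Request(
        API_URL,
        data=body,
        headers={"Content-Type": "application/json", "x-api-key": api_key},
        method="POST",
    )


req = build_scrape_request("https://example.com", "YOUR_API_KEY", "text-llm")
```

To actually send it, pass the request to urllib.request.urlopen and handle the response as described in the API reference. Keeping the format in a single argument means moving between markdown-llm, text-llm, and html-llm stays a one-value change.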

You can also try these new formats in our API Playground.

This release is a direct result of feedback from developers building with our standard output formats. If there are other pipeline-specific formats or cleaning behaviors you’d find useful, please let us know.