Billions of data points sit on public websites, like product prices, customer reviews, job postings, news articles, and competitor announcements. If you run an e-commerce store and need to track the prices of 500 products across 10 competitor websites every morning, doing it by hand would take days.

A web scraper does the same job in minutes. Web scraping is the process of automatically collecting unstructured data spread across hundreds of websites in different formats and organizing it into a structured format.

How web scraping works

A web scraper visits websites, reads the page content, pulls out the specific pieces of information you need, and saves that data in a structured format such as a spreadsheet or database.

It follows the same steps you would take if you had just five sites to check: open the site, locate the relevant information, and copy it into a spreadsheet or other document. The difference is scale and speed, so a scraper can work through thousands of pages in a few minutes. The process is explained in more detail in our guide on web scraping.
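The fetch, extract, and save steps described above can be sketched with nothing but Python's standard library. This is a minimal illustration under stated assumptions, not a production scraper: the page layout (elements with the classes `product`, `name`, and `price`), the user-agent string, and the helper names are all hypothetical, and real sites need selectors written for their actual markup.

```python
import csv
import io
from html.parser import HTMLParser
from typing import Optional
from urllib.request import Request, urlopen


def fetch(url: str) -> str:
    """Step 1: download a page's HTML (not called in the offline example below)."""
    # Hypothetical User-Agent value; identify your scraper honestly.
    req = Request(url, headers={"User-Agent": "example-scraper/0.1"})
    with urlopen(req, timeout=10) as resp:
        return resp.read().decode("utf-8", errors="replace")


class ProductParser(HTMLParser):
    """Step 2: extract name/price text from elements with the assumed CSS classes."""

    def __init__(self) -> None:
        super().__init__()
        self.rows: list[dict[str, str]] = []
        self._field: Optional[str] = None  # field the next text node belongs to

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if "product" in classes:
            self.rows.append({})  # a new product record starts here
        elif "name" in classes:
            self._field = "name"
        elif "price" in classes:
            self._field = "price"

    def handle_endtag(self, tag):
        self._field = None

    def handle_data(self, data):
        text = data.strip()
        if self._field and self.rows and text:
            self.rows[-1][self._field] = text
            self._field = None


def to_csv(rows: list[dict[str, str]]) -> str:
    """Step 3: save the structured records, here as CSV text."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


# Hypothetical page snippet standing in for fetch(...) so the example runs offline.
SAMPLE = (
    '<div class="product"><span class="name">Widget</span><span class="price">19.99</span></div>'
    '<div class="product"><span class="name">Gadget</span><span class="price">24.50</span></div>'
)

if __name__ == "__main__":
    parser = ProductParser()
    parser.feed(SAMPLE)
    print(to_csv(parser.rows))
```

Running the file prints the two sample products as CSV rows. Pointing it at a real page means replacing `SAMPLE` with the output of `fetch(url)` and adapting the class names to that page's structure, which is where most of the real effort in scraping goes.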

Types of data that can be extracted

Almost any text or number you can see on a public webpage can be scraped. Common examples are product names and prices, customer reviews and ratings, contact information, news headlines, job listings, stock prices, and social media posts.

Why Businesses Perform Web Scraping

Businesses scrape the web because much of the information they depend on sits outside their own systems, for example exchange filings, competitor pricing, and research reports.

For example, an investment firm using outdated exchange filings or financial disclosures can end up making recommendations based on numbers that have already changed, something clients can easily verify, making the firm look careless.

Decisions made with current, accurate data are better than those made with outdated information, and web scraping delivers up-to-date data automatically and on a schedule.

Scraping is a practical way to quickly gather all that scattered web data and convert it into something you can actually use.

The applications of scraping range from small to enterprise scale.

- A local retailer can use it to keep an eye on competitor pricing.

- A Fortune 500 company can use it to monitor brand mentions on social media.

- A startup can use it to build a prospect list without paying for expensive (and probably outdated) third-party databases.

The underlying value for all the above use cases is the same: faster access to better information.

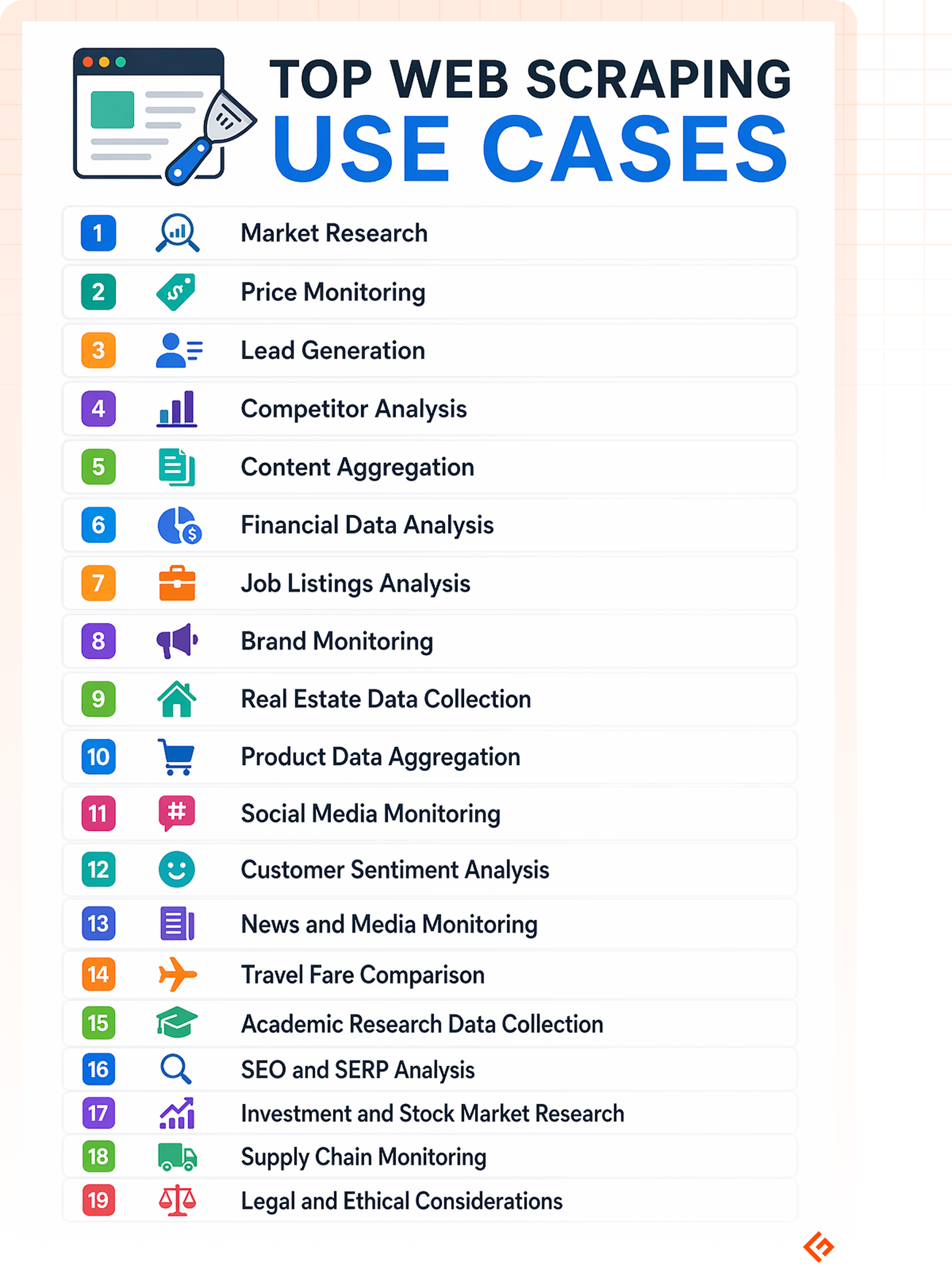

Top Web Scraping Use Cases

We’ll now discuss some common web scraping use cases.

Market Research

Traditional market research is slow and expensive. Surveys take weeks to design and run. Research reports may already be months out of date by the time you buy them.

Web scraping gives businesses a way to gather market data in real time. You can monitor which products customers are talking about, what features they praise or complain about, and which new players are entering your market. All of this can be gathered from public sources such as review sites, forums, social media, and retailer listings.

This kind of ongoing intelligence is far more useful than an expensive, outdated quarterly report.

Price Monitoring

Pricing is one of the most competitive levers your business has, and it moves constantly. If a competitor drops their price on a key product or service and you don’t find out for a week, you will lose sales in the meantime.

E-commerce price scraping lets retailers and brands automatically track competitor prices across many websites in near real time.

When a price changes, you find out on the next scraping run instead of days later, and you can respond with dynamic pricing strategies. This is already standard practice among e-commerce sellers, airlines, and hotels.
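Detecting a competitor's price change is, at its core, a comparison between two scraping runs. The sketch below assumes prices have already been scraped into dictionaries keyed by a product identifier; the function name, the SKU keys, and the `threshold_pct` parameter are illustrative, not part of any particular tool.

```python
from decimal import Decimal


def price_changes(
    previous: dict[str, Decimal],
    current: dict[str, Decimal],
    threshold_pct: Decimal = Decimal("0"),
) -> list[tuple[str, Decimal, Decimal, Decimal]]:
    """Return (sku, old, new, percent_change) for prices that moved by at least threshold_pct."""
    changes = []
    for sku, new in current.items():
        old = previous.get(sku)
        # Skip products seen for the first time, unchanged prices, and a zero baseline.
        if old is None or old == new or old == 0:
            continue
        pct = ((new - old) / old * 100).quantize(Decimal("0.01"))
        if abs(pct) >= threshold_pct:
            changes.append((sku, old, new, pct))
    return changes


if __name__ == "__main__":
    yesterday = {"SKU-1": Decimal("20.00"), "SKU-2": Decimal("10.00")}
    today = {"SKU-1": Decimal("18.00"), "SKU-2": Decimal("10.00"), "SKU-3": Decimal("5.00")}
    for sku, old, new, pct in price_changes(yesterday, today):
        print(f"{sku}: {old} -> {new} ({pct}%)")  # SKU-1: 20.00 -> 18.00 (-10.00%)
```

`Decimal` avoids floating-point rounding surprises with currency, and the threshold lets you ignore small fluctuations before a change triggers a repricing rule.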

Lead Generation

Building a list of potential customers is one of the most time-consuming parts of sales. Web scraping automates a significant portion of it.

Scrapers can collect business names, website URLs, email addresses, phone numbers, locations, and other publicly available contact details from directories, LinkedIn, and similar sources.

This is particularly useful for B2B companies targeting specific industries, geographies, or company sizes.

Competitor Analysis

Being aware of your competition is one of the most important factors for staying relevant in the market. Keeping track of competitor websites, product offerings, pricing, and messaging manually is impractical when there are multiple competitors and information changes frequently.

Competitor data scraping automates this monitoring. You can track when a competitor updates their pricing page, adds or removes a product, changes their messaging, or publishes new content. By analyzing this data, you can spot gaps in the market and identify products worth adding to your own lineup.
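One simple way to notice that a competitor's page has changed is to store a fingerprint of its text after each run, compare the next run against it, and diff the two versions to see what moved. This sketch assumes the page's visible text has already been extracted; the helper names are hypothetical, and hashing plus a line diff is only one of several possible change-detection approaches.

```python
import difflib
import hashlib
import re
from typing import Optional


def fingerprint(page_text: str) -> str:
    """Hash the text with whitespace collapsed, so whitespace-only changes are ignored."""
    normalized = re.sub(r"\s+", " ", page_text).strip()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def has_changed(page_text: str, last_fingerprint: Optional[str]) -> bool:
    """True on the first run (no stored fingerprint) or when the content differs."""
    return last_fingerprint is None or fingerprint(page_text) != last_fingerprint


def changed_lines(old_text: str, new_text: str) -> list[str]:
    """List removed (-) and added (+) lines between two versions of a page."""
    diff = list(
        difflib.unified_diff(old_text.splitlines(), new_text.splitlines(), lineterm="", n=0)
    )
    # The first two lines of a non-empty unified diff are the ---/+++ headers.
    return [line for line in diff[2:] if line.startswith(("+", "-"))]


if __name__ == "__main__":
    old = "Basic: $19/mo\nPro: $49/mo"
    new = "Basic: $19/mo\nPro: $59/mo"
    if has_changed(new, fingerprint(old)):
        print(changed_lines(old, new))  # ['-Pro: $49/mo', '+Pro: $59/mo']
```

Storing only the hash keeps the check cheap; keeping the previous text as well is what makes it possible to report exactly which lines changed.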

Content Aggregation

Some businesses are built entirely around aggregating content from multiple sources, such as job boards, real estate listings, news feeds, and product catalogs. Web scraping makes this possible at scale.

A web scraper collects and refreshes that content automatically. If you’re building a comparison site, a curated news platform, or any product that depends on pulling information from across the web, automated web scraping should be your foundation.

Financial Data Analysis

Finance teams inside businesses need current data to plan accurately, not just for investments but for operations. Raw material costs, shipping rates, currency exchange fluctuations, and competitor revenue disclosures all affect budgeting and forecasting decisions.

Web scraping pulls this data from commodity pricing sites, central bank publications, industry reports, and public financial filings automatically. Instead of an analyst manually checking multiple sources each morning to build a picture of cost exposure or market conditions, that data is collected and ready.

For companies with significant exposure to commodity prices or foreign exchange, like manufacturers, importers, and large retailers, having the latest information improves the quality of financial planning.

Job Listings Analysis

Job postings are a window into what’s happening inside companies, and that signal is useful well beyond HR teams.

When a business suddenly posts 20 engineering roles focused on AI, that tells you something about their strategic direction. When a competitor stops hiring entirely, that tells you something too.

- Business strategy teams use this competitor hiring data as a signal of business priorities.

- Recruiting firms use it to identify where demand for talent is growing.

- Universities and online learning platforms use this information to design new courses.

- HR teams use job listing scraping to benchmark salaries and understand how their compensation compares to the market.

Brand Monitoring

Your brand may be mentioned in thousands of places online, such as review sites, forums, news articles, social media, and blog posts. Knowing what people are saying about your business and responding quickly when something goes wrong is crucial for your reputation. That requires monitoring all of those platforms so you learn about problems before they spread.

Web scraping makes that continuous monitoring possible. Scrapers collect that data automatically and flag relevant mentions for your team to review. This applies to reputation management, PR, and customer experience. Catching a problem early is always better than discovering it after a trolling post goes viral.
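Flagging relevant mentions can start as plain keyword matching over scraped posts. The sketch below assumes the posts have already been collected as strings; the brand name `Acme`, the alert terms, and the function name are made up for illustration.

```python
import re


def flag_mentions(posts: list[str], brand: str, alert_terms: list[str]) -> list[tuple[str, bool]]:
    """Return (post, needs_attention) for every post that mentions the brand as a whole word."""
    brand_re = re.compile(rf"\b{re.escape(brand)}\b", re.IGNORECASE)
    # One case-insensitive pattern matching any alert term as a whole word.
    alert_re = (
        re.compile(r"\b(?:" + "|".join(map(re.escape, alert_terms)) + r")\b", re.IGNORECASE)
        if alert_terms
        else None
    )
    flagged = []
    for post in posts:
        if brand_re.search(post):
            urgent = bool(alert_re and alert_re.search(post))
            flagged.append((post, urgent))
    return flagged


if __name__ == "__main__":
    posts = [
        "Loving my new Acme blender",
        "ACME support never answered, total scam",
        "A post about acmeology",  # not a whole-word match, so it is ignored
    ]
    for post, urgent in flag_mentions(posts, "Acme", ["scam", "refund"]):
        print("URGENT" if urgent else "mention", "|", post)
```

Whole-word matching avoids false hits on longer words that merely contain the brand name; the `needs_attention` flag is one way to route the most worrying mentions to a person first.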

Real Estate Data Collection

Real estate moves on timing. Properties come on and off the market, prices adjust, and trends shift.

If your data is even a few days old, you could be analyzing properties that are already sold or pricing deals that no longer exist.

Real estate professionals, investors, and data platforms use web scraping to track listings across multiple sites, monitor price changes, and analyze market trends so that they have the latest data at hand.

Product Data Aggregation

Retailers and brands managing large catalogs need accurate, consistent product data across multiple channels.

Web scraping is used to collect and update product names, descriptions, images, and pricing from supplier sites, marketplaces, and distributor catalogs automatically.

This is important for businesses selling on platforms like Amazon, Google Shopping, or product comparison sites, where data needs to stay accurate and current across every listing.

Social Media Monitoring

Social media platforms are where customers talk openly about products, services, and brands. Scraping insights from social media lets companies track what their customers are actually saying without relying on filtered feedback or surveys that people rarely respond to.

This data helps in product development, marketing, customer service, and competitive intelligence. It’s a direct view of unfiltered public opinion about your brand.

Customer Sentiment Analysis

Reviews and ratings across platforms like Google, Yelp, Trustpilot, and Amazon give businesses a detailed picture of how customers feel about their products and services.

Rather than reading through reviews manually, businesses combine web scraping with sentiment analysis tools to process large volumes of feedback, track how sentiment shifts over time, and compare their reputation with competitors’. Because reviews are unprompted and far more numerous than survey responses, this method can be more reliable than asking customers directly.
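To make "tracking sentiment shifts over time" concrete, here is a deliberately tiny lexicon-based scorer that averages review sentiment per month. It is a stand-in for illustration, not how commercial sentiment analysis tools work (they typically rely on trained models); the word lists, the ISO date format, and the function names are all assumptions.

```python
import re
from collections import defaultdict
from statistics import mean

# Toy word lists; a real lexicon or model would be far larger.
POSITIVE = {"great", "love", "fast", "excellent", "reliable"}
NEGATIVE = {"broken", "slow", "refund", "terrible", "late"}


def score(text: str) -> float:
    """Score from -1.0 (only negative words) to 1.0 (only positive words); 0.0 if none match."""
    words = re.findall(r"[a-z']+", text.lower())
    pos = sum(w in POSITIVE for w in words)
    neg = sum(w in NEGATIVE for w in words)
    return 0.0 if pos + neg == 0 else (pos - neg) / (pos + neg)


def monthly_sentiment(reviews: list[tuple[str, str]]) -> dict[str, float]:
    """Average score per month, given (ISO date 'YYYY-MM-DD', review text) pairs."""
    by_month: dict[str, list[float]] = defaultdict(list)
    for day, text in reviews:
        by_month[day[:7]].append(score(text))  # 'YYYY-MM' prefix groups by month
    return {month: round(mean(scores), 2) for month, scores in sorted(by_month.items())}


if __name__ == "__main__":
    reviews = [
        ("2025-01-03", "Great blender, love it"),
        ("2025-01-20", "Arrived late and slow to heat"),
        ("2025-02-02", "Terrible, broken after a week"),
    ]
    print(monthly_sentiment(reviews))  # {'2025-01': 0.0, '2025-02': -1.0}
```

The monthly averages are what you would chart to spot a shift; swapping `score` for a proper model leaves the aggregation step unchanged.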

News and Media Monitoring

A pharmaceutical company needs to know the moment a regulatory decision changes. A financial firm needs to catch an earnings report leak before markets react. A manufacturer needs to know about a raw material shortage before it hits their supply chain.

These aren’t situations where checking Google News once a day is enough. Businesses in fast-moving or heavily regulated industries use web scraping to monitor hundreds of news sources simultaneously and continuously. These include trade publications, government announcement pages, wire services, industry blogs, and mainstream media outlets. This works as an early warning system.

Travel Fare Comparison

Airfare and hotel prices change by the hour based on demand, availability, and competitor pricing. Travel comparison sites are built almost entirely on scraping this data from airline and booking websites in real time.

For businesses managing significant travel budgets, the same approach can be used to monitor price trends and book at the right time.

Academic Research Data Collection

Research projects that require large volumes of real-world data, such as data on social behavior or economic trends, often rely on web scraping to collect it. Academic institutions and research organizations scrape data from public websites, government databases, and archives at a scale that would be impossible through manual collection.

SEO and SERP Analysis

Search engine results pages (SERPs) change constantly based on algorithm updates, competitor activity, and content freshness. Businesses use web scraping to track where their pages rank for important keywords, monitor how competitors are performing in search, and identify opportunities to improve. This helps in content strategy and SEO investment decisions.
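Rank tracking comes down to finding where your domain first appears in an ordered list of result URLs. The sketch assumes the organic result URLs for one keyword have already been collected in ranking order; `rank_of` and the example domains are hypothetical, and it treats subdomains (such as `blog.example.com`) as part of the domain.

```python
from typing import Optional
from urllib.parse import urlparse


def rank_of(domain: str, result_urls: list[str]) -> Optional[int]:
    """1-based position of the first result on `domain` or one of its subdomains, else None."""
    domain = domain.lower()
    for position, url in enumerate(result_urls, start=1):
        host = urlparse(url).hostname or ""  # urlparse already lowercases the hostname
        if host == domain or host.endswith("." + domain):
            return position
    return None


if __name__ == "__main__":
    results = [
        "https://www.competitor.com/blender-guide",
        "https://blog.example.com/best-blenders",
        "https://example.com/",
    ]
    print(rank_of("example.com", results))  # 2
```

Recording this number per keyword on every run gives you the ranking history that content and SEO decisions are based on.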

Investment and Stock Market Research

Investors and fund managers evaluate companies for investment decisions, and the data required goes beyond just stock prices and management commentary. Executive leadership changes, SEC filings, patent applications, supplier relationships, hiring activity, and earnings call language carry signals about where a company is headed.

Web scraping lets analysts pull this information from across multiple sources like regulatory databases, company investor relations pages, financial news sites, and court records. This gives a more complete picture of a company’s health than what shows up in a standard brokerage terminal.

Supply Chain Monitoring

Global supply chains depend on information that changes rapidly, and the stakes are higher now than they have been in years. As of early 2026, the US-Israel conflict with Iran has pushed global supply chain pressures to a three-year high. Disruptions at the Strait of Hormuz are driving up freight costs, lengthening delivery times, and causing alarm worldwide, particularly in the Middle East.

Businesses use web scraping to monitor supplier websites, commodity pricing, logistics data, and industry news automatically.

When disruptions happen, teams that are monitoring this data continuously can respond faster than those relying on periodic reports or waiting for suppliers to reach out.

E-commerce Trend Analysis

Consumer preferences shift constantly. New product categories emerge, demand spikes for certain items, and trends fade quickly.

E-commerce businesses use scraping to monitor which products are gaining traction on marketplaces like Amazon, what’s trending in search, and how competitors are adjusting their product mix. This helps in product decisions, inventory planning, and marketing focus.

Legal and Ethical Considerations

Web scraping is widely used and legal in many contexts, but there are boundaries to respect. Business owners should understand the following considerations before adding web scraping to their operations.

Publicly available data vs. protected data: Scraping information that is publicly visible on a website is generally permitted, while scraping data behind a login or from explicitly protected systems is not. Courts in the United States have mostly held that scraping publicly available information is permissible, but this area of law is still evolving.

Website terms of service: Many websites prohibit scraping in their terms of service. Violating these terms doesn’t always carry legal consequences, but it can result in your access being blocked, and in some cases it can create legal issues. It’s worth reviewing the terms of any site you plan to scrape regularly.

Data privacy regulations: Laws like GDPR in Europe and CCPA in California place restrictions on how personal data, including data scraped from public websites and social media profiles, can be collected, stored, and used. If your scraping collects personal information about individuals, these regulations can apply to you.

Responsible use: Sending too many requests to a website in a short period can strain its servers and disrupt the site for other users. Responsible scraping means keeping request rates reasonable and avoiding behavior that puts unnecessary load on the sites you access.
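A common way to put responsible request rates into practice is to honor a site's robots.txt rules (including any `Crawl-delay`) and enforce a minimum pause between requests. The sketch below uses Python's standard `urllib.robotparser`; the class name, the user-agent string, the two-second fallback delay, and the sample robots.txt are assumptions for illustration.

```python
import time
from typing import Optional
from urllib.robotparser import RobotFileParser


class PoliteFetcher:
    """Checks robots.txt rules and spaces out requests to one site."""

    def __init__(self, robots_lines: list[str], user_agent: str = "example-scraper",
                 default_delay: float = 2.0) -> None:
        self.user_agent = user_agent
        self.rules = RobotFileParser()
        # Parsed from lines here; against a live site you would call set_url(...) and read().
        self.rules.parse(robots_lines)
        crawl_delay = self.rules.crawl_delay(user_agent)
        # Prefer the site's own Crawl-delay; otherwise fall back to our default.
        self.delay = float(crawl_delay) if crawl_delay is not None else default_delay
        self._last_request: Optional[float] = None

    def allowed(self, url: str) -> bool:
        return self.rules.can_fetch(self.user_agent, url)

    def wait_turn(self) -> None:
        """Sleep just long enough to keep at least `self.delay` seconds between requests."""
        if self._last_request is not None:
            remaining = self.delay - (time.monotonic() - self._last_request)
            if remaining > 0:
                time.sleep(remaining)
        self._last_request = time.monotonic()


# Hypothetical robots.txt content for an example shop.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Crawl-delay: 5
""".splitlines()

if __name__ == "__main__":
    fetcher = PoliteFetcher(ROBOTS_TXT)
    for url in ["https://shop.example.com/products/1", "https://shop.example.com/checkout/cart"]:
        if fetcher.allowed(url):
            fetcher.wait_turn()
            print("would fetch", url)
        else:
            print("skipping (disallowed)", url)
```

Using `time.monotonic()` for the spacing keeps the delay correct even if the system clock is adjusted while the scraper runs.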

Conclusion

The web is a massive database. The businesses that come out on top will be the ones that stop reading it page by page and start automating the way they digest it.

When a shipping delay in the Strait of Hormuz holds up a critical car component you depend on, or a competitor on Amazon silently drops their price at 3 AM, you can’t afford to wait three days for a report to tell you about it.

By the time you’re reading a news summary or a quarterly trend analysis, the biggest opportunities have usually already passed. Geekflare Scraping API gives you the raw, unfiltered view of what’s happening right now, whether that’s tracking SEC filings for a sudden investment opportunity or monitoring Trustpilot to see why a specific product is suddenly being trolled.

Web scraping has moved from a nice-to-have to a core business requirement for survival.

Frequently Asked Questions

Which industries use web scraping the most?

E-commerce, financial services, real estate, travel, and media are among the heaviest users. However, virtually every business that needs to track competitors, monitor markets, or collect large volumes of public data has a use for it.

Is web scraping useful for small businesses?

Yes. Small businesses often benefit the most because they don’t have large research teams. Scraping lets a small team access competitive intelligence and market data that would otherwise require significant resources to obtain.

How accurate is scraped data?

Accuracy depends largely on the quality of the scraper and the reliability of the source. Well-built scrapers that are properly maintained produce highly accurate data. The bigger risk is that a scraper that isn’t run regularly produces data that is outdated and no longer useful.

What are the main risks of web scraping?

The main risks are legal exposure from violating terms of service or data privacy laws, disruption caused by overloading the target site’s servers, and incorrect data being captured if a target website changes its structure.

Can every website be scraped?

Not always. Some websites use technical measures to block scrapers, require logins to access content, or serve data in ways that are difficult to extract automatically. Publicly accessible, non-authenticated pages are the easiest to scrape.

Do you need programming skills to scrape websites?

Building a scraper from scratch typically requires programming knowledge, most commonly in Python. However, there are also no-code and low-code tools and web scraping APIs like Geekflare Scraping API that are relatively easy to use. For larger or more complex projects, most businesses work with developers or third-party scraping services.

How often should you scrape data?

It depends on what you’re using the data for. Pricing data in a competitive e-commerce environment or stock market information might need to refresh hourly. Job listings monitoring might run daily. Market research aggregation could run weekly. Set the frequency based on how quickly the data changes and how quickly your business needs to respond.

Should you use an API or web scraping?

When the website or platform provides an API, it’s generally the preferred option. Native APIs are purpose-built for data access, tend to be more reliable, and are explicitly permitted by the data provider. Web scraping makes sense when no API exists or when the API doesn’t provide the data you need.