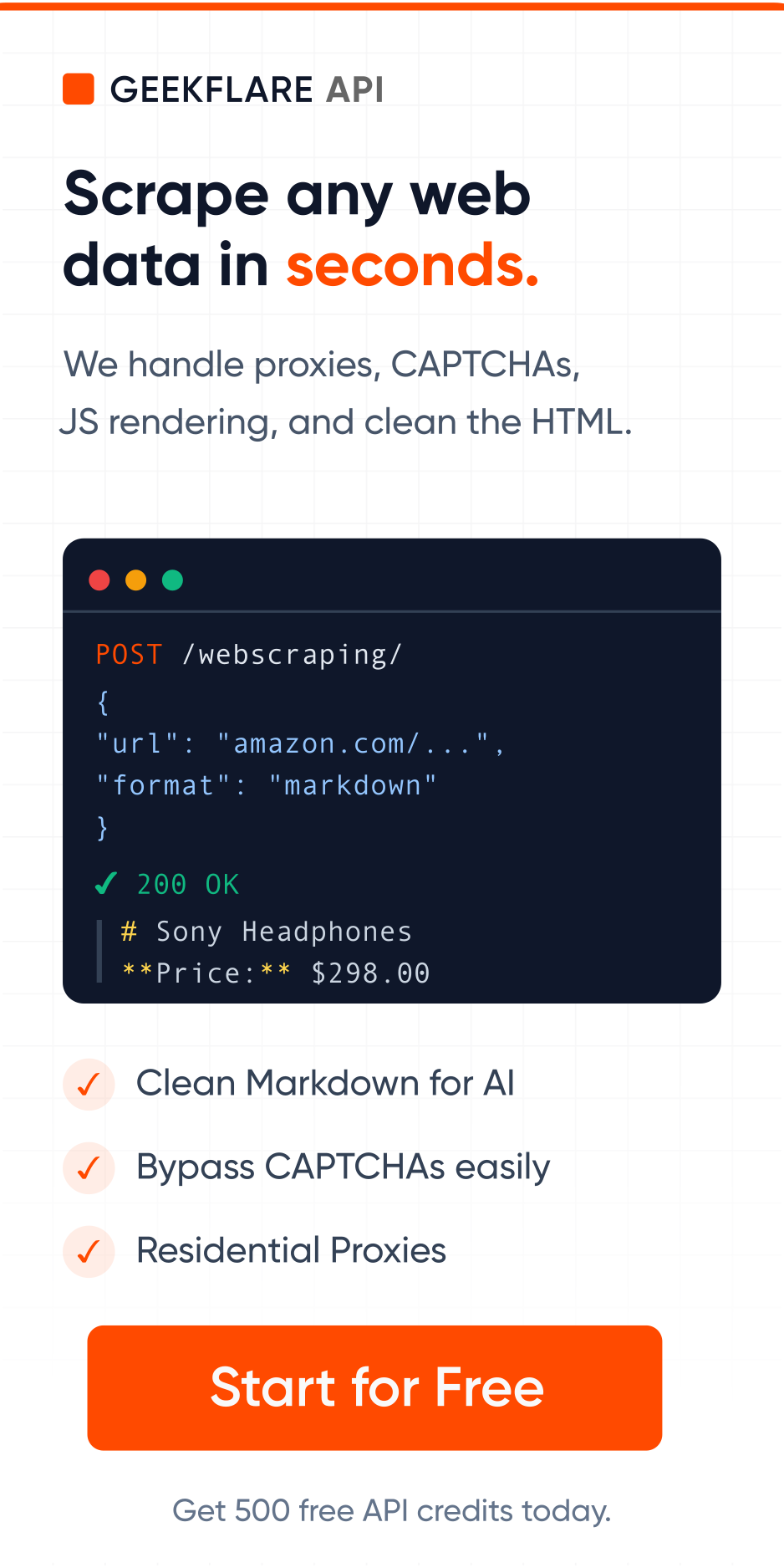

People who are new to working with data often use the terms web scraping and data mining interchangeably. I used to do it myself, but not anymore.

It’s an easy mix-up. Much of the data people need is available on the web, so if you want to ‘mine’ data, you ‘scrape’ the web, right? Not quite. The two are closely related, but they solve different problems.

First, you need a way to collect the data. That’s where web scraping comes in.

Then you figure out what that data actually means. That’s data mining.

Think of it like fishing versus cooking. One gets the raw ingredients; the other turns them into something useful.

This article walks through both. I’ll discuss what they are, how they differ, and when you would use one or the other.

What is web scraping?

Web scraping is the process of automatically pulling information from websites. Instead of someone manually copying data from a webpage into a spreadsheet, a scraper does the work: it visits pages, reads the content, and extracts the specific pieces of information you need.

Web Scraping Tool

To scrape any site into Markdown, JSON, text, or HTML, you can try the Geekflare Scraping API, which comes with 500 free credits.

For example:

- Pulling product prices from competitor websites

- Extracting reviews from marketplaces

- Collecting job listings from multiple portals

The data extracted through web scraping is usually unstructured or semi-structured: raw text, prices, product names, reviews, contact details, and whatever else appears on those pages.

Key Characteristics:

- It targets publicly accessible websites. Anything a browser can load, a scraper can usually read.

- It automates repetitive data collection. Tasks that would take a person hours can take a web scraper seconds or minutes.

- The output is raw data. It needs cleaning and organizing before it’s truly useful.

- It can run on demand or on a schedule.

- It focuses on data collection, not analysis.
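To make “collection, not analysis” concrete, here is a minimal sketch of the extraction step. It uses only Python’s built-in `html.parser`. Several pieces are assumptions made for illustration:

- the sample markup
- the class names `product`, `name`, and `price`
- the `scrape_products` helper

A real scraper would first download the page over HTTP, and most projects would use BeautifulSoup or Scrapy rather than a hand-written parser.

```python
from html.parser import HTMLParser

# Hypothetical product-listing markup. A real scraper would first download
# the page over HTTP; the tag and class names here are assumptions.
SAMPLE_HTML = """
<ul class="products">
  <li class="product"><span class="name">Desk Lamp</span> <span class="price">$24.99</span></li>
  <li class="product"><span class="name">Office Chair</span> <span class="price">$149.00</span></li>
</ul>
"""


class ProductParser(HTMLParser):
    """Collects the text inside <span class="name"> and <span class="price">."""

    def __init__(self):
        super().__init__()
        self.products = []         # one dict per <li class="product">
        self.current_field = None  # "name" or "price" while inside that span

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "li" and "product" in classes:
            self.products.append({})
        elif tag == "span" and self.products:
            for field in ("name", "price"):
                if field in classes:
                    self.current_field = field

    def handle_endtag(self, tag):
        if tag == "span":
            self.current_field = None

    def handle_data(self, data):
        # Only record text while we are inside a name or price span.
        if self.current_field:
            self.products[-1][self.current_field] = data.strip()


def scrape_products(html):
    """Return the raw name/price strings found in the markup."""
    parser = ProductParser()
    parser.feed(html)
    parser.close()
    return parser.products


for product in scrape_products(SAMPLE_HTML):
    print(product)
# {'name': 'Desk Lamp', 'price': '$24.99'}
# {'name': 'Office Chair', 'price': '$149.00'}
```

The prices come back as strings such as `'$24.99'`. The scraper only collects; turning those strings into numbers is a separate cleanup step.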

What is data mining?

Data mining is the process of analyzing large datasets to find patterns, trends, correlations, and insights that aren’t immediately obvious. It’s what happens when you dig through the data looking for something meaningful.

It doesn’t care where the data came from. The data could be from your CRM, spreadsheets, logs, or data collected through web scraping.

For example:

- Finding which products sell best in certain regions

- Identifying customer churn patterns

- Detecting fraud or anomalies

Some popular data mining techniques are clustering (grouping similar records), classification (assigning records to predefined categories), and regression (predicting a numeric value, such as next quarter’s sales, from historical data).
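Of these techniques, clustering is the easiest to show end to end. The sketch below runs plain k-means (Lloyd’s algorithm) on a single made-up feature, annual spend per customer, using only the standard library. The `kmeans_1d` and `assign` helpers, the sample numbers, and the choice of k = 3 are assumptions for illustration. In practice you would more likely use scikit-learn’s `KMeans` with several features at once.

```python
import statistics


def assign(values, centroids):
    """Put each value in the cluster of its nearest centroid."""
    clusters = [[] for _ in centroids]
    for v in values:
        nearest = min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
        clusters[nearest].append(v)
    return clusters


def kmeans_1d(values, k, max_iterations=20):
    """Plain k-means (Lloyd's algorithm) on a single numeric feature."""
    # Start from k evenly spaced points of the sorted data.
    ordered = sorted(values)
    step = (len(ordered) - 1) / (k - 1) if k > 1 else 0
    centroids = [ordered[round(i * step)] for i in range(k)]

    for _ in range(max_iterations):
        clusters = assign(values, centroids)
        # Move each centroid to the mean of its cluster (keep it if the cluster is empty).
        updated = [statistics.mean(c) if c else centroids[i] for i, c in enumerate(clusters)]
        if updated == centroids:
            break  # assignments have stopped changing
        centroids = updated
    return centroids, assign(values, centroids)


# Hypothetical annual spend per customer, in dollars.
annual_spend = [120, 135, 150, 980, 1010, 1050, 4800, 5100]
centroids, segments = kmeans_1d(annual_spend, k=3)
print(segments)  # [[120, 135, 150], [980, 1010, 1050], [4800, 5100]]
```

The three groups read naturally as low, mid, and high spenders. Nobody labeled them in advance; the grouping comes out of the data, which is what “finding patterns” means here.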

Key Characteristics:

- Focuses on data analysis and insights

- Uses statistical and machine learning methods

- Works on large datasets

- Outputs are patterns, predictions, or models.

Web Scraping vs. Data Mining: Key Differences

Purpose

The purpose of data mining is discovery: finding patterns and meaning within data you already have.

The purpose of web scraping is collection: getting data from external sources into your system.

Data Sources

Web scraping pulls from external sources such as websites, online directories, product listings, social media pages, and news articles. The internet is its main source.

Data mining works with your scraped data or pre-existing datasets like your CRM, transaction history, customer database, or any repository of information your organization has accumulated. You can mine data collected through web scraping, but you can also mine data from surveys, sensors, purchase records, or any other source.

Web scraping is only one of the possible sources of data for data mining.

Techniques Used

Web scraping relies on techniques like HTML parsing (reading the structure of a webpage to locate specific elements), CSS selectors, XPath queries, APIs, and browser automation. Some advanced scrapers can handle JavaScript-heavy sites or bypass anti-scraping measures.
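XPath queries do the same locating job declaratively. As a rough illustration, Python’s built-in `xml.etree.ElementTree` supports a limited XPath subset. The markup and class names below are hypothetical. ElementTree also needs well-formed markup, so messy real-world HTML would normally go through a lenient parser such as lxml, which also offers full XPath 1.0 and CSS selectors.

```python
import xml.etree.ElementTree as ET

# Hypothetical, well-formed (XHTML-style) markup. ElementTree is a strict XML
# parser, so real-world HTML often needs a more forgiving parser instead.
PAGE = """
<html>
  <body>
    <div class="listing"><h2>Desk Lamp</h2><span class="price">$24.99</span></div>
    <div class="listing"><h2>Office Chair</h2><span class="price">$149.00</span></div>
  </body>
</html>
"""

root = ET.fromstring(PAGE.strip())

# ElementTree understands a subset of XPath: descendant search (.//),
# tag names, child steps (/), and attribute predicates like [@class='price'].
names = [h2.text for h2 in root.findall(".//div[@class='listing']/h2")]
prices = [span.text for span in root.findall(".//span[@class='price']")]

print(list(zip(names, prices)))
# [('Desk Lamp', '$24.99'), ('Office Chair', '$149.00')]
```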

Data mining techniques are statistical and algorithmic, like machine learning models, decision trees, neural networks, and clustering algorithms. These are the tools of data science, not web development.
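Decision trees are easy to sketch in miniature. A decision stump is a decision tree with a single split: it picks the one feature and threshold that best separate two classes. Several pieces below are hypothetical:

- the churn data
- the feature meanings
- the `fit_stump` and `predict` helpers

Real libraries such as scikit-learn grow trees with many splits and choose them with criteria like Gini impurity or entropy rather than a raw error count.

```python
def fit_stump(rows, labels):
    """Fit a one-split decision tree (a "decision stump").

    Tries every feature and every observed value as a threshold, in both
    directions, and keeps the rule with the fewest training errors.
    Returns (feature_index, threshold, positive_if_at_or_below).
    """
    best = None
    for f in range(len(rows[0])):
        for threshold in sorted({row[f] for row in rows}):
            for positive_if_below in (True, False):
                # Count rows where the rule's prediction disagrees with the label.
                errors = sum(
                    ((row[f] <= threshold) == positive_if_below) != label
                    for row, label in zip(rows, labels)
                )
                if best is None or errors < best[0]:
                    best = (errors, f, threshold, positive_if_below)
    return best[1:]


def predict(stump, row):
    f, threshold, positive_if_below = stump
    return (row[f] <= threshold) == positive_if_below


# Hypothetical customers: [months since last login, support tickets filed]
rows = [[1, 0], [2, 1], [1, 3], [6, 0], [8, 2], [7, 1]]
churned = [False, False, False, True, True, True]

stump = fit_stump(rows, churned)
print(stump)                   # (0, 2, False): churn if months since login > 2
print(predict(stump, [5, 0]))  # True
```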

Output and Results

Web scraping outputs raw data, like a spreadsheet with prices, a list of email addresses, a table of product descriptions, etc.

Data mining outputs insights: a customer segment you didn’t know existed, a churn risk score for each subscriber, a product recommendation, or a shortlist of qualified leads from a raw list of scraped email addresses. It’s intelligence derived from patterns in the data.

Data Type

Web scraping handles unstructured or semi-structured data like text on pages, prices in inconsistent formats, and reviews of varying lengths. It collects what’s there and leaves the organizing to the user.

Data mining works best with structured data organized in rows and columns with consistent formats. If your data is messy, you’ll need to clean it up before any meaningful mining can happen.
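A typical cleanup step turns inconsistent scraped strings into consistent, typed columns. The sketch below does this for prices. It assumes US-style number formatting, where a comma separates thousands and a period marks decimals; a value like “45,50” would need extra rules. The sample rows and the `parse_price` helper are hypothetical, and in practice this work is often done with pandas.

```python
import re

# Assumes US-style numbers: "," separates thousands and "." marks decimals.
PRICE_PATTERN = re.compile(r"\d[\d,]*(?:\.\d+)?")


def parse_price(raw):
    """Extract the first number in a scraped price string, or None if there isn't one."""
    match = PRICE_PATTERN.search(raw or "")
    if match is None:
        return None
    return float(match.group().replace(",", ""))


# Hypothetical raw rows as a scraper might return them.
scraped = [
    {"name": " Desk Lamp ", "price": "$24.99"},
    {"name": "Office Chair", "price": "USD 1,149.00"},
    {"name": "Monitor Arm", "price": "Call for price"},
]

# Normalize names and prices into consistent, typed columns.
clean = [{"name": r["name"].strip(), "price": parse_price(r["price"])} for r in scraped]

# Drop rows that still have no usable price before mining.
ready = [r for r in clean if r["price"] is not None]
print(ready)
# [{'name': 'Desk Lamp', 'price': 24.99}, {'name': 'Office Chair', 'price': 1149.0}]
```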

Process Complexity

Web scraping can range from simple to highly complex. Scraping a basic HTML page with a list of names and phone numbers is relatively easy. But scraping a site that loads content dynamically, requires a login, frequently changes its page structure, or actively blocks bots is much harder.

Data mining is usually complex. It requires statistical knowledge, understanding of the business context, model selection, and careful interpretation of results.

Tools Used

Common web scraping tools include Python libraries like BeautifulSoup and Scrapy, browser automation tools like Playwright and Selenium, scraping APIs like the Geekflare Scraping API, and commercial scraping platforms like Apify, Octoparse, or Bright Data.

Common data mining tools include Python’s pandas and scikit-learn libraries, R, Apache Spark for big data mining, and platforms like RapidMiner or KNIME. Enterprise tools like Tableau and Power BI cover the visualization side. The complexity of the task determines which tool to use.

Level of Analysis

Web scraping involves zero analysis. It doesn’t interpret anything. It fetches what’s there on the web. The intelligence is in where you point it and what you ask it to collect.

Data mining is pure analysis. It doesn’t fetch or generate new data. It looks at the existing data and finds patterns. The intelligence is in how you frame your questions and how you build your models.

Common Use Cases

Web scraping is commonly used for:

- Competitor price monitoring

- Lead generation (collecting contact information from directories)

- Market research (gathering product reviews, news mentions, job listings)

- Real estate data collection (aggregating listings from multiple sites)

- Content aggregation

Data mining is commonly used for:

- Customer segmentation and targeting

- Fraud detection

- Churn prediction

- Product recommendation engines

- Supply chain optimization

- Risk assessment in finance and insurance

Skill Requirements

Web scraping requires knowledge of web technologies such as HTML, CSS, and HTTP requests, plus some programming ability, most commonly in Python. Complex scraping projects call for solid software development skills, but many no-code tools can do the job if you are not a developer.
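At its simplest, the HTTP side of a scraper is a GET request. The sketch below uses only Python’s `urllib.request`. The bot name and the 10-second timeout are assumptions. Sites that render content with JavaScript or require a login need more than this, such as browser automation with Playwright or Selenium.

```python
import urllib.request

# Hypothetical bot name; identifying your scraper honestly is good practice.
USER_AGENT = "example-research-bot/0.1"


def build_request(url):
    """Create a GET request that identifies the client via the User-Agent header."""
    return urllib.request.Request(url, headers={"User-Agent": USER_AGENT})


def fetch_html(url, timeout=10):
    """Download a page and decode it using the charset the server declares."""
    with urllib.request.urlopen(build_request(url), timeout=timeout) as response:
        charset = response.headers.get_content_charset() or "utf-8"
        return response.read().decode(charset, errors="replace")
```

Calling `fetch_html("https://example.com/")` returns the page source as a string, ready to hand to a parser.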

Data mining requires a background in statistics, machine learning, and domain knowledge to interpret findings correctly. Understanding what a pattern actually means for your business requires experience and judgment.

I’ve summarized the differences in the table below.

| | Web Scraping | Data Mining |
|---|---|---|
| Purpose | To collect data | To find insights |
| Data Sources | External sources (mainly the web) | Any dataset, internal or scraped |
| Techniques | HTML parsing, APIs, automation | ML, statistics, algorithms |
| Output and Results | Raw data | Patterns, predictions, insights |
| Data Type | Unstructured / semi-structured | Structured / clean |
| Complexity | Simple to complex | Mostly complex |
| Tools Used | Scrapy, Selenium, APIs | pandas, scikit-learn, BI tools |
| Level of Analysis | No analysis | Pure analysis |
| Common Use Cases | Price tracking, leads | Segmentation, prediction |
| Skills Required | Web + coding skills | Stats + domain knowledge |

How Web Scraping and Data Mining Work Together

In real projects, web scraping and data mining often go hand in hand.

A typical flow looks like this:

- Use web scraping to collect data

- Clean and structure the data

- Apply data mining techniques to extract insights

- Use those insights for decisions

Example:

- Scrape competitor pricing data

- Analyze pricing trends using data mining

- Adjust your pricing strategy
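The three-step pricing example can be sketched in a few lines. The sketch assumes the scraping and cleaning steps have already produced a list of daily competitor prices. The numbers, the `linear_trend` helper, and the “review our price” rule are hypothetical. The trend is a plain least-squares slope, far simpler than what a real pricing engine would rely on.

```python
def linear_trend(values):
    """Ordinary least-squares slope of values against their index (0, 1, 2, ...).

    Needs at least two values; the result is the average change per step.
    """
    n = len(values)
    xs = range(n)
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, values))
    var = sum((x - mean_x) ** 2 for x in xs)
    return cov / var


# Hypothetical daily competitor prices, already scraped and cleaned.
competitor_prices = [49.0, 48.5, 48.0, 47.0, 46.5]
our_price = 49.0

slope = linear_trend(competitor_prices)  # average price change per day
if slope < 0 and our_price > competitor_prices[-1]:
    print(f"Competitor prices are falling ({slope:.2f} per day); review our price of {our_price:.2f}.")
```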

Without scraping, you don’t have external data. Without mining, that data just sits there.

When to Use Web Scraping vs. Data Mining

Use web scraping when:

- You don’t have the data yet

- The data exists on websites

- You need continuous data collection.

Use data mining when:

- You already have data

- You want insights or predictions

- You need to support business decisions.

Use both when:

- You want to build data-driven systems (pricing engines, dashboards, AI models)

Conclusion

Web scraping and data mining solve two different but connected problems.

- Web scraping = data extraction from the web.

- Data mining = data analysis for insights.

FAQs

Is web scraping the same as data mining?

No. They are related but separate activities. Web scraping is a method of data extraction, while data mining is a method of data analysis. Scraping is one possible way to build the dataset that data mining works on.

Can web scraping be used to collect training data for machine learning?

Yes, and it is done regularly. Web scraping is one of the most common ways to build large datasets for training machine learning models, particularly in natural language processing, where models are trained on text scraped from the web. However, the data needs cleaning and structuring before it’s used for training.

Which industries use data mining the most?

Retail, finance, healthcare, telecom, and marketing rely heavily on data mining for decision-making. Retailers use it for recommendation engines and inventory forecasting. Banks use it for credit scoring and fraud detection. Healthcare organizations use it to identify at-risk patients. Telecom companies use it to spot usage patterns and detect spam.

Do you need coding skills for web scraping and data mining?

Web scraping: Not always (many no-code tools exist)

Data mining: Usually yes, especially for advanced analysis

What types of data can be used for data mining?

Structured data (databases), semi-structured data (logs, JSON), and even text data can be analyzed using data mining techniques.

Can scraped data be combined with internal data for mining?

Yes, and many businesses do it. A common workflow involves scraping external data, like competitor prices, market trends, and consumer sentiment, and then combining it with internal data and running mining processes across the combined dataset.

What are the legal and ethical concerns with web scraping and data mining?

For web scraping, the main concerns involve respecting a website’s terms of service (many explicitly prohibit scraping), avoiding excessive server load, and being careful with personal data collected incidentally. Privacy regulations like GDPR affect what you can do with scraped data involving individuals.
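One practical way to respect a site’s stated crawling rules and limit server load is to check its robots.txt and pace your requests. Python’s standard library includes `urllib.robotparser` for this. The robots.txt content, user-agent string, and 1-second fallback delay below are assumptions. Robots.txt is a convention: passing this check does not mean a site’s terms of service permit scraping.

```python
import time
import urllib.robotparser

# Hypothetical robots.txt content; a real crawler would instead call
# parser.set_url("https://example.com/robots.txt") followed by parser.read().
ROBOTS_TXT = """
User-agent: *
Disallow: /checkout/
Crawl-delay: 2
""".splitlines()

USER_AGENT = "example-price-bot"  # hypothetical bot name

parser = urllib.robotparser.RobotFileParser()
parser.parse(ROBOTS_TXT)


def allowed(url):
    """True if robots.txt permits our user agent to fetch this URL."""
    return parser.can_fetch(USER_AGENT, url)


def polite_delay():
    """Seconds to wait between requests; falls back to 1 if the site sets none."""
    return parser.crawl_delay(USER_AGENT) or 1


def crawl(urls, fetch):
    """Fetch only permitted URLs, pausing between requests."""
    for url in urls:
        if not allowed(url):
            print("skipping (disallowed by robots.txt):", url)
            continue
        fetch(url)
        time.sleep(polite_delay())
```

With these rules, a product page such as `https://example.com/products/lamp` is allowed and anything under `/checkout/` is skipped.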

For data mining, the main concerns are data privacy, algorithmic bias (where models replicate or amplify unfair patterns in historical data), and transparency about how automated decisions are made.

How do I get started with data mining?

Start with:

- Basic statistics

- Excel or SQL

- Python or R for data analysis

You also need to understand how to interpret results, not just generate them.